Published on Jan 09, 2026

As personal projection devices become more common they will be able to support a range of exciting and unexplored social applications. We present a novel system and method that enables playful social interactions between multiple projected characters. The prototype consists of two mobile projector-camera systems, with lightly modified existing hardware, and computer vision algorithms to support a selection of applications and example scenarios.

Our system allows participants to discover the characteristics and behaviors of other characters projected in the environment. The characters are guided by hand movements, and can respond to objects and other characters, to simulate a mixed reality of life-like entities.

The use of mobile devices for social and playful interactions is a rapidly growing field. Mobile phones now provide the high-performance computing power needed to support high-resolution animation via embedded graphics processors, and always-on Internet connectivity via 3G cellular networks. Further advances in mobile projection hardware make it possible to explore more seamless social interactions between co-located users with mobile phones. PoCoMo is a system developed in our lab to begin prototyping social scenarios enabled by collaborative mobile projections in the same physical environment.

PoCoMo is a set of mobile, handheld projector-camera systems, embedded in fully functional mobile phones. The proposed system’s goal is to enable users to interact with one another and their environment, in a natural, ad-hoc way. The phones display projected characters that align with the view of the camera on the phone, which is running software that tracks the position of the characters.

Two characters projected in the same area will quickly respond to one another in the context of the features of the local environment. PoCoMo is implemented on commercially available hardware and standardized software libraries to increase the likelihood of adoption by developers and to provide example scenarios that advocate for projector-enabled mobile phones. Multiple phone manufacturers have experimental models with projection capabilities, but to our knowledge none of them has placed the camera in line of sight of the projection area. We expect models that support this capability for AR purposes to become available in the near future.

PoCoMo makes use of a standard object tracking technique [5] to sense the presence of other projected characters, and extract visual features from the environment as part of a game. The algorithm is lightweight, enabling operation in a limited resource environment, without a great decrease in usability. Extending mobile phones to externalize display information in the local environment and respond to other players creates new possibilities for mixed reality interactions that are relatively unexplored. We focus in particular on the social scenarios of acknowledgment, relating, and exchange in games enabled between two co-located users. This paper describes the design of the system, implementation details, and our initial usage scenarios.

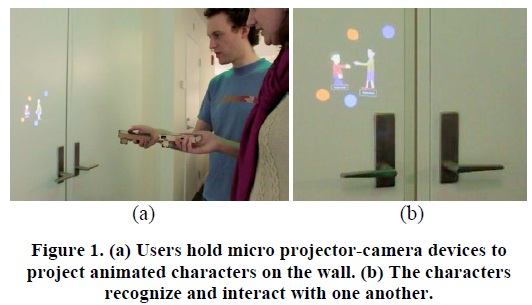

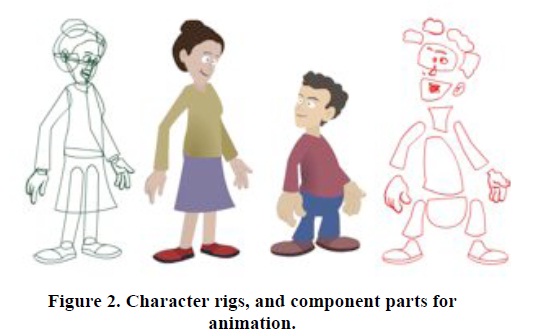

PoCoMo is a compact mobile projector-camera system, built on a commercially available cellular smartphone that includes a pico-projector and video camera. In the current prototype, the projector and camera are aligned in the same field of view via a plastic encasing and a small mirror (see Figure 1). The setup enables the program to track both the projected image and a portion of the surrounding surface. All algorithms are executed locally on the phones. The characters are built from component parts (see Figure 2) that articulate separately, and they trigger different animation sequences based on the proximity of other characters so that they appear life-like in the environment. We have designed characters for a number of scenarios to demonstrate how such a system may be used for playful collaborative experiences:

The projected characters can respond to the presence and orientation of one another and express their interest in interaction. Initially they acknowledge each other by turning to face one another and smiling.

When characters have acknowledged each other, a slow approach by one character toward the other can trigger a gesture of friendship such as waving (medium distance) or shaking hands (close proximity).

When characters are moved through the environment they begin a walk cycle, whose pace corresponds to the speed at which their position changes. If the characters are within view of each other, their cycles approximately match. The synchronized steps help create the feeling of a shared environment, and provide feedback about proximity.

Characters can find and leave presents for one another at distinct features in the environment. We provide this as an example of collaborative interactions that can extend into multiple sessions and relate to specific physical locations.

In the initial sensing stage, the projected character displays an interested look: the eyes follow the possible location of the other character. The presence of another character is established once the two markers have been observed in the scene for more than 20 of the last 40 frames. Once another character is determined to be in view, the operated character turns to face the friend character. If the characters linger for 80-100 frames, they wave in the direction of the other. If the characters are aligned, they attempt a handshake. Alignment is considered sufficient if the center point of the friend character's projection is within a normalized range.
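The presence rule and the alignment check described above can be sketched as follows. This is a minimal illustration, not the prototype's code: the class and function names are hypothetical, and the `tolerance` value for the normalized range is an assumption, since the text does not state it.

```python
from collections import deque


class PresenceDetector:
    """Sliding-window vote: present if seen in more than 20 of the last 40 frames."""

    def __init__(self, window=40, min_hits=20):
        self.min_hits = min_hits
        self.history = deque(maxlen=window)  # one boolean per processed frame

    def update(self, both_markers_seen):
        """Record one frame and report whether the other character counts as present."""
        self.history.append(bool(both_markers_seen))
        return sum(self.history) > self.min_hits


def is_aligned(friend_center_x, frame_width, tolerance=0.15):
    """Check that the friend's center lies in a normalized band around the frame center.

    tolerance is an assumed value; the text only says "a normalized range".
    """
    normalized = friend_center_x / frame_width  # 0.0 = left edge, 1.0 = right edge
    return abs(normalized - 0.5) <= tolerance
```

A bounded window keeps the decision robust to a few dropped detections while still letting the character lose interest once the other projection has left the view.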

The handshake is a key-frame animation of 10 frames, with 10-frame transitions from a standing pose. In order to synchronize the animation, the initiating character displays a third colored marker for a short period of time to index the key-frame timing. The second character reads the signal and starts its own sequence as well. The handshake sequences of the characters are designed to have equal-length animations for interoperability.
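One way to picture the synchronization is that both phones start the same fixed-length timeline at the frame where the sync marker is shown or seen. The sketch below assumes a 10-frame transition both into and out of the handshake; the transition back to standing, the phase names and the class itself are illustrative assumptions rather than details from the prototype.

```python
TRANSITION_FRAMES = 10  # standing pose to handshake pose (from the text)
HANDSHAKE_FRAMES = 10   # key-frame handshake animation (from the text)
# Assumption: the character also takes 10 frames to return to standing.
TOTAL_FRAMES = 2 * TRANSITION_FRAMES + HANDSHAKE_FRAMES


class HandshakePlayer:
    """Plays a fixed-length handshake timeline indexed from the sync marker frame."""

    def __init__(self):
        self.start_frame = None

    def on_sync_marker(self, frame_index):
        """Called when the third marker is displayed (initiator) or detected (follower)."""
        if self.start_frame is None:
            self.start_frame = frame_index

    def phase_at(self, frame_index):
        """Return the animation phase for a given frame."""
        if self.start_frame is None:
            return "standing"
        elapsed = frame_index - self.start_frame
        if elapsed < 0 or elapsed >= TOTAL_FRAMES:
            return "standing"
        if elapsed < TRANSITION_FRAMES:
            return "transition_in"
        if elapsed < TRANSITION_FRAMES + HANDSHAKE_FRAMES:
            return "handshake"
        return "transition_out"
```

Because both timelines have equal length, two players that index from the same marker frame stay in the same phase for the whole sequence.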

The characters can also leave a present on a certain object for the other character to find. This is done when the character is placed near an object, without movement, for over 3 seconds. An animation starts, showing the character leaning to leave a box on the ground, and the user is prompted for an item to leave: an image or any kind of file on the phone. The system extracts SURF descriptors of key-points on the object in the scene, and sends the data along with the batch of descriptors to a central server. The present location is recorded relative to strong features like corners and high-contrast edges. When a character is in present-seeking mode, it looks for features in the scene that match those stored on the server. If a feature match is strongly correlated, the present will begin to fade in. We assume that users will leave presents in places where they have previously interacted, to provide a context for searching for any virtual items in the scene.
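The present-seeking step can be illustrated with a small descriptor-matching sketch. Plain float vectors stand in for SURF descriptors here, and the nearest-neighbor ratio test, the `ratio` and `min_fraction` values, and the linear mapping from match strength to opacity are all assumptions chosen for illustration; the text does not say how correlation is measured.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two descriptor vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def count_good_matches(scene, stored, ratio=0.75):
    """Count scene descriptors whose nearest stored descriptor passes a ratio test."""
    good = 0
    for descriptor in scene:
        dists = sorted(euclidean(descriptor, s) for s in stored)
        # Accept only if the best match is clearly better than the second best.
        if len(dists) >= 2 and dists[0] < ratio * dists[1]:
            good += 1
    return good


def present_opacity(scene, stored, min_fraction=0.5):
    """Map match strength to an opacity in [0, 1] so the present fades in."""
    if not scene or not stored:
        return 0.0
    fraction = min(1.0, count_good_matches(scene, stored) / len(stored))
    if fraction < min_fraction:
        return 0.0  # too weak a match: keep the present hidden
    return (fraction - min_fraction) / (1.0 - min_fraction)
```

With four stored descriptors, a scene that reproduces three of them closely yields an opacity of 0.5, and a scene that reproduces all four yields 1.0.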

Our system is responsive to the phone’s motion sensing capabilities, an accelerometer and gyroscope. The characters can begin a walk sequence animation when the device is detected to move in a certain direction at a constant rate. The accelerometer used in our prototype only gives a derivative motion reading, i.e. it reports the beginning and end of a motion but nothing during it. Therefore we use two cues, Start of Motion and End of Motion, which trigger the starting and halting of the walk sequence. The system also responds to shaking gestures, which interrupt the current operation of the character.
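The two motion cues and the shake gesture map naturally onto a small state machine. The following sketch assumes hypothetical event names (`start_of_motion`, `end_of_motion`, `shake`) and a two-state character; how the prototype derives these events from the sensors is not shown.

```python
class WalkController:
    """Starts and halts the walk sequence from discrete motion cues."""

    def __init__(self):
        self.state = "idle"

    def on_event(self, event):
        """Apply one motion event and return the resulting state."""
        if event == "start_of_motion" and self.state == "idle":
            self.state = "walking"
        elif event == "end_of_motion" and self.state == "walking":
            self.state = "idle"
        elif event == "shake":
            # A shake interrupts whatever the character is currently doing.
            self.state = "idle"
        return self.state
```

Since the sensor reports nothing during the motion itself, the walk cycle simply keeps playing between the two cues.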

We chose to use the Samsung Halo projector-phone as the platform for our system. This Android-OS system has a versatile development environment. The tracking and detection algorithms were implemented in C++ and compiled to a native library, and the UI of the application was implemented in Java using the Android API. We used the OpenCV library to implement the computer vision algorithms.

To align the camera with the projection, we designed a casing with a mirror that fits on the device. Figure 4 shows the development stages in the design of the prototype. First, our prototype was attached to the device, and then we transitioned to a full-device casing to support handling the phone. Finally, we designed a rounded case made from a more robust plastic to protect the mirror and allow it to be decoupled from the phone.

In future systems we plan to make the detection more robust and integrate markers with the projected content. We also plan to develop animations that correlate to specific user profiles and migrate the application to devices with wider fields of view for the projector and camera. In addition, we will conduct a user study to assess the fluidity of the interaction and improve the scenarios.

We present PoCoMo, a system for collaborative mobile interactions with projected characters. PoCoMo is an integrated mobile platform independent of external devices and physical markers. The proposed methods do not require any setup and can be played in any location. Our system allows users to interact together in the same physical environment with animated mixed-reality characters. The use of projectors for multiplayer gaming affords more natural and spontaneous shared experiences. Closely related systems support utilitarian tasks such as sharing [3] or inventory management [10]. We explore novel social interactions enabled by mobile projectors and hope developers will contribute other scenarios and games within the domain of collaborative mobile projection.

1. Blasko, G., Feiner, S., and Coriand, F. Exploring interaction with a simulated wrist-worn projection display. Proceedings of the Ninth IEEE International Symposium on Wearable Computers, (2005), 2-9.

2. Cao, X., Forlines, C., and Balakrishnan, R. Multiuser interaction using handheld projectors. Proceedings of the 20th annual ACM symposium on User interface software and technology, ACM (2007), 43–52.

3. Cao, X., Forlines, C., and Balakrishnan, R. Multiuser interaction using handheld projectors. Proceedings of the 20th annual ACM symposium on User interface software and technology, ACM (2007), 43–52.

4. Cootes, T.F., Edwards, G.J., and Taylor, C.J. Active appearance models. IEEE Transactions on Pattern Analysis and Machine Intelligence 23, 6 (2001), 681-685.

5. Fieguth, P. and Terzopoulos, D. Color-based tracking of heads and other mobile objects at video frame rates. Proceedings of IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 21-27.
