Updated on May 29, 2026

Lip segmentation is an essential stage in many multimedia systems such as video-conferencing, lip reading, and low-bit-rate coding communication systems. The widespread availability of image and video acquisition devices has also encouraged the development of many computer vision applications, such as vision-based surveillance, vision-based man-machine interfaces, and vision-based biometrics.

Hence lip extraction has become a broad field. This paper presents two algorithms for extracting the lip contour. The first is a color-based algorithm derived from a quadratic polynomial, under the assumption that the color of the lips lies in the range of blue. Its accuracy is 91% for people with normal skin color and 95% for people with fairer skin color. The second is a model-based algorithm, which gives accuracy up to 96%.

Detection of the lip contour is a fundamental procedure in mouth feature extraction. It forms a preliminary stage of face image analysis, which is essential for numerous application areas including man-machine interaction based on observed human behavior, video-telephony, face and person identification, bimodal speech recognition, face and visual speech synthesis, and facial expression classification. All of these applications require an efficient and fully automated mouth feature extraction method, which can be achieved using an automatic lip contour detection technique. Observing the pixels of the lips, we find that the lip color of fairer-skinned people ranges from dark red to purple, while for people with normal skin color it lies in the range of blue under normal light conditions.

From the perspective of human visual perception, the lips are easy to differentiate from the rest of the face because of the contrast in color. However, the outer labial contour of the mouth has very poor color distinction against its skin background, which makes lip extraction a difficult problem. Different lip extraction techniques are therefore needed to improve the contrast between the lips and the other face regions. The assumption that lip color varies from dark red to purple holds for people with a very fair complexion.

For people with normal skin color we assume that the lip color varies in the range of blue. We used the chromatic color space (Cheng Chin et al, 2003), which is constructed from the RGB color space (Cheng Chin et al, 2003), together with the RGB color space itself: the chromatic color space is not suitable for distinguishing between bright pixels and dark pixels, so RGB is used to exclude dark pixels. In the chromatic color space, each pixel is represented by two values, denoted by (r, g). Their relationship is explained in section IV-A. Because brightness is normalized in the chromatic color space, dark pixels and bright pixels might have the same converted r and g values, and thus we have used both color spaces. Our second algorithm is a model-based algorithm (T.F.Cootes et al, 1995), hence it does not need any color constraint.
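The paper's own definition of (r, g) is in its section IV-A, which is not reproduced here. As a minimal sketch, assuming the common normalized-rg definition r = R/(R+G+B), g = G/(R+G+B), the following shows why a bright pixel and a dark pixel can collapse to the same chromatic values, and why a separate RGB brightness test is then needed. The function names and the threshold value are hypothetical and chosen only for illustration.

```python
def to_chromatic(rgb):
    """Map an (R, G, B) pixel to normalized (r, g) values.

    Assumption: r = R / (R + G + B), g = G / (R + G + B).
    """
    red, green, blue = rgb
    total = red + green + blue
    if total == 0:
        # Pure black has no defined chromaticity; returning zeros is a
        # convention of this sketch, not something taken from the paper.
        return (0.0, 0.0)
    return (red / total, green / total)


def is_dark(rgb, threshold=60):
    """Hypothetical brightness test on the raw RGB values."""
    return sum(rgb) / 3 < threshold


bright = (200, 100, 100)
dark = (20, 10, 10)

# Both pixels have the same ratio between channels, so (r, g) is identical.
print(to_chromatic(bright), to_chromatic(dark))

# The RGB space still tells them apart, so dark pixels can be excluded there.
print(is_dark(bright), is_dark(dark))
```

The two pixels differ only in brightness, which is exactly the information the normalization discards.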

We have chosen different databases for testing the two methods. For a person with a very fair complexion, a dark red to purple color is applied to the lips; for a person with normal skin color, a blue color is applied. A dataset of 150 fairer-skinned people with red color on the lips was taken from the internet for testing. For people with normal skin color we constructed the database ourselves by applying blue color to the lips; it contains the faces of 120 people.

Two types of pictures are taken in both cases, as listed below.

Images in which only a part of the face with the lips is available

Images in which the whole face is available

We have tested the first algorithm on both types of images. The second algorithm is tested on images in which only a part of the face with the lips is available.

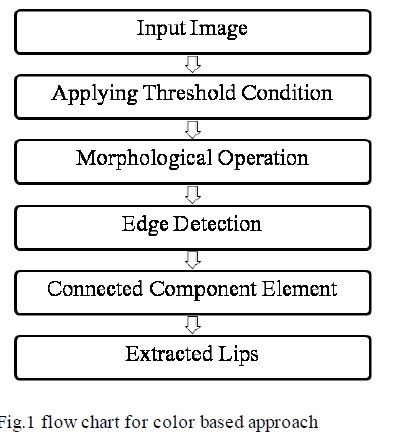

The overview of the first proposed method for extracting the lip contour from the face is shown in Figure 1.

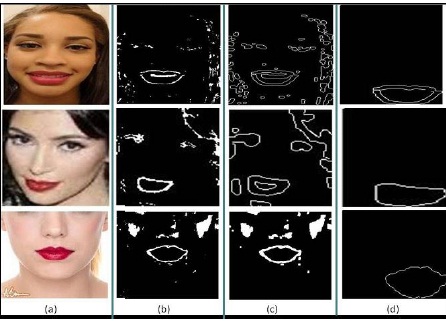

To extract the upper and lower contours of the lips, which are mostly diagonal edges, we apply edge detection using the Sobel edge detector. It smooths the image along the direction perpendicular to the one in which it differentiates, which makes it a good edge detector for diagonal edges.
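The Sobel step can be sketched in plain Python as follows. This is an illustrative implementation using the standard 3x3 Sobel kernels, not the authors' code; the tiny synthetic image with a diagonal boundary is an assumption made only for the demonstration, and border pixels are simply left at zero.

```python
import math

# Standard 3x3 Sobel kernels: each differentiates along one axis and
# smooths (weights 1, 2, 1) along the other.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_magnitude(image):
    """Return the gradient magnitude of a 2-D grayscale image (list of rows)."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = gy = 0
            for dy in range(3):
                for dx in range(3):
                    pixel = image[y + dy - 1][x + dx - 1]
                    gx += SOBEL_X[dy][dx] * pixel
                    gy += SOBEL_Y[dy][dx] * pixel
            out[y][x] = math.hypot(gx, gy)
    return out


# Synthetic 6x6 image with a diagonal boundary: bright where x >= y.
image = [[10 if x >= y else 0 for x in range(6)] for y in range(6)]
edges = sobel_magnitude(image)

for row in edges:
    print(" ".join(f"{value:5.1f}" for value in row))
```

The response is non-zero along the diagonal boundary and zero inside the two flat regions, since both the horizontal and the vertical kernel react to a diagonal step.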

Assuming that the largest connected component in the face is the lips, we apply the 8-connected component algorithm to identify them. Figure 3 shows an instance of the above steps for people with normal skin color, and Figure 2 shows the result for fairer-skinned people.
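Selecting the largest 8-connected component can be sketched as a breadth-first flood fill over a binary mask. The mask below is a made-up example, and the function name is hypothetical; the source does not specify how its connected-component step is implemented.

```python
from collections import deque


def largest_component_8(mask):
    """Return the pixel coordinates (row, col) of the largest 8-connected
    component of truthy cells in a 2-D binary mask."""
    height, width = len(mask), len(mask[0])
    seen = [[False] * width for _ in range(height)]
    best = []
    for start_y in range(height):
        for start_x in range(width):
            if not mask[start_y][start_x] or seen[start_y][start_x]:
                continue
            seen[start_y][start_x] = True
            queue = deque([(start_y, start_x)])
            component = []
            while queue:
                y, x = queue.popleft()
                component.append((y, x))
                # Visit all 8 neighbours, diagonals included.
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < height and 0 <= nx < width
                                and mask[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            if len(component) > len(best):
                best = component
    return best


mask = [
    [1, 0, 0, 0, 0],
    [0, 0, 0, 1, 0],
    [0, 0, 1, 0, 1],
    [0, 1, 0, 0, 0],
]
lips = largest_component_8(mask)
print(sorted(lips))
```

In this mask the four pixels of the larger component touch only diagonally, so they form one component under 8-connectivity but would be four separate pixels under 4-connectivity. That matters for lip contours, which the text describes as mostly diagonal edges.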

The second algorithm is designed for a specific set of frontal face images with an open mouth, taken from the whole database. This model-based algorithm uses a Mean Shape Model (MSM). The database used for training is hand designed. The algorithm consists of four major steps: (a) training on the data set, (b) calculating the MSM, (c) normalizing the MSM by aligning it at the minimum angle to the principal axis, and (d) MSM deformation.

The training set contains 20 open-mouth images from which the MSM is built. Open-mouth images are selected so that the model covers the maximum outer lip contour of any input image, whatever the size of the database. Thus, for this experiment, the training set contains only open-mouth images, giving a large shape model that covers all other types of lip movement through inner deformation of the model alone.
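Steps (b) and (c) can be illustrated with a small sketch. It rests on several assumptions that the source does not confirm: each training shape is a list of hand-marked (x, y) landmarks in the same order, shapes are aligned only by removing translation (no scale or Procrustes alignment), and "normalizing" is read as rotating the mean shape so that its principal axis lies along the x-axis. The deformation step (d) is not shown, and all function names are hypothetical.

```python
import math


def center(shape):
    """Translate a shape so that its centroid is at the origin."""
    count = len(shape)
    cx = sum(x for x, _ in shape) / count
    cy = sum(y for _, y in shape) / count
    return [(x - cx, y - cy) for x, y in shape]


def mean_shape(shapes):
    """Average corresponding landmarks over all centered training shapes."""
    centered = [center(shape) for shape in shapes]
    count = len(centered)
    return [
        (sum(shape[i][0] for shape in centered) / count,
         sum(shape[i][1] for shape in centered) / count)
        for i in range(len(centered[0]))
    ]


def principal_axis_angle(shape):
    """Angle (radians) between the x-axis and the principal axis of a
    centered shape, from its second-order moments."""
    sxx = sum(x * x for x, _ in shape)
    syy = sum(y * y for _, y in shape)
    sxy = sum(x * y for x, y in shape)
    return 0.5 * math.atan2(2 * sxy, sxx - syy)


def rotate(shape, angle):
    """Rotate a shape counter-clockwise by the given angle about the origin."""
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    return [(cos_a * x - sin_a * y, sin_a * x + cos_a * y) for x, y in shape]


# Two made-up training shapes: left corner, top, right corner, bottom.
shape_a = [(0, 0), (2, 1), (4, 0), (2, -1)]
shape_b = [(10, 10), (12, 12), (14, 10), (12, 8)]

msm = mean_shape([shape_a, shape_b])
tilted = rotate(msm, 0.3)                # pretend the model is tilted
angle = principal_axis_angle(tilted)     # recovers the tilt
aligned = rotate(tilted, -angle)         # principal axis back on the x-axis
print(msm)
print(round(angle, 3))
```

A real training set would hold the 20 open-mouth shapes mentioned above instead of two toy shapes.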

Algorithm 1 is tested on 270 images: 150 images of fairer-skinned people with dark red to purple lips, and 120 images of people with normal skin color. The test images contain both full faces and parts of faces. The accuracy of the proposed algorithm is 91% for normal skin and 95% for fairer skin: 142 of the 150 images give a correct result for fairer-skinned people, and 110 of the 120 images give a correct result for people with normal skin color. Algorithm 2 is tested on 50 images from the normal skin database, containing frontal face images that show the mouth only. Of these 50 images, 48 fulfilled the criteria for acceptance of the lip contour. Figure 5 shows the results in detail. Both algorithms work well even on low-resolution images, but performance degrades with poor illumination.
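The reported percentages can be checked directly from the image counts given above. The short sketch below only redoes that arithmetic; the dictionary labels are descriptive names chosen here.

```python
def accuracy_percent(correct, total):
    """Share of correctly segmented images, in percent."""
    return 100.0 * correct / total


# (correct, total) counts as stated in the text.
results = {
    "algorithm 1, fairer skin": (142, 150),
    "algorithm 1, normal skin": (110, 120),
    "algorithm 2": (48, 50),
}

for label, (correct, total) in results.items():
    print(f"{label}: {accuracy_percent(correct, total):.1f}%")
# algorithm 1, fairer skin: 94.7%
# algorithm 1, normal skin: 91.7%
# algorithm 2: 96.0%
```

The counts give 94.7%, which rounds to the reported 95%, and 91.7%, which matches the reported 91% only if the value is truncated rather than rounded.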

Proposed algorithm 1 gives good results for both skin colors and works under different well-constrained database conditions. It has an accuracy of 91% for normal skin color and 95% for fair skin color. The accuracy is consistent across databases provided that the lip color is red and the image quality is good. The performance degrades if the lip color is neutral and any other facial feature is similar in color to the lips; handling this case is proposed as a future enhancement of the algorithm. In algorithm 2, lip contour segmentation is performed using the MSM deformation approach, which gives good results for the outer landmarks. This work can be further extended to the inner landmark points of the mouth, which define the position of the mouth. This could also identify how far the lips are open, which is highly desirable in visual speech recognition systems. Both algorithms work well on good-quality images; a small degradation is observed in poorly illuminated images.

