Updated on May 29, 2026

The Eye Directive wheelchair is a mobility aid for people with moderate to severe physical disabilities or chronic diseases, as well as for the elderly. Various wheelchair control interfaces are available on the market, but they remain under-utilized because of the physical ability, strength and presence of mind required to operate them. The proposed model is a possible alternative. It uses an optical eye-tracking system to control a powered wheelchair: the user’s eye movements are translated to a screen position without any direct contact.

When the user looks in a given direction, the computer input system sends a command to the software based on the angle of rotation of the pupil. Looking left moves the wheelchair left, looking right moves it right, and looking straight ahead moves it forward. In all other cases the wheelchair stops.

Obstacle detection sensors are also connected to the Arduino to provide the feedback needed for proper operation of the wheelchair and to ensure the user’s safety. The motors attached to the wheelchair support differential steering, which avoids clumsy motion. The wheelchair also has a joystick control to ensure safe movement when the user’s eyes are tired, and a safety stop button that lets the user stop the wheelchair at any time.

The wheelchair design illustrated here is a well-equipped and flexible motorized wheelchair that paralytic and motor-disabled patients can drive without straining their posture. The gaze movement is tracked automatically and the wheelchair is directed according to the eye position. It is an eco-friendly and cost-effective wheelchair that dissipates little power and can be fabricated using minimal resources. The system has been designed with the user’s physical disability in mind, so it does not affect the patient physically. Obstacle and ground clearance sensing is performed to ensure the patient’s safety, and an audible notification is given for obstacles. A joystick is also built in as an alternative means of control.

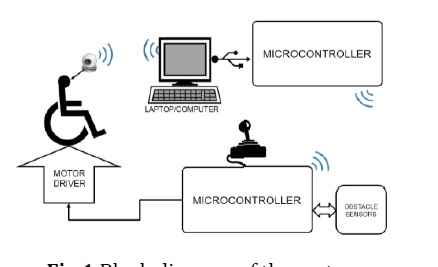

In the Image Capturing Module, images are captured using a wireless camera and sent to the base station (computer/laptop) for further processing. In Microprocessor Interfacing, the digital output generated by the base station is used to direct the motors of the wheelchair. The microprocessor also handles obstacle detection and the user inputs. The system’s functional block diagram is illustrated in Fig. 1.

Wireless camera: The user’s eye is captured with a pinhole wireless camera, which transmits the images to the base station wirelessly.

Computer base station: The images received from the camera are processed using the Open Source Computer Vision library (OpenCV), and the gaze movement is sent to the chair via XBee communication.

Microcontroller: The microcontrollers maintain the wireless communication protocol. On the receiver side, the microcontroller also handles obstacles and manual user inputs.

Motor driver: The motor driver provides the high current required to drive the motors.

The images are captured using a pinhole wireless camera, illustrated in Fig. 2. It offers effective surveillance protection. It has an operating range of 150 feet and provides full-motion, real-time color video with no delay.

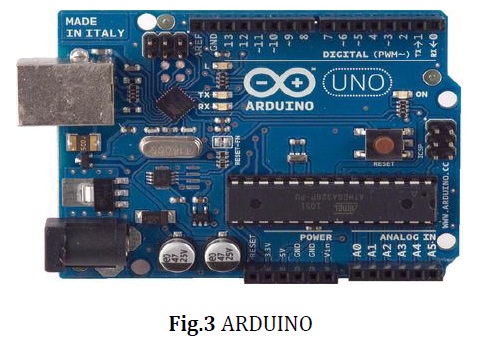

The microcontroller used in this model is an Arduino, shown in Fig. 3. Arduino is a single-board microcontroller intended to make building interactive objects or environments more accessible. The hardware consists of an open-source board designed around an 8-bit Atmel AVR microcontroller or a 32-bit Atmel ARM processor.

The system uses two microcontrollers. The transmitting microcontroller is connected to the processing unit. It converts the information received from the processing unit into signals and transmits them wirelessly to the receiving microcontroller attached to the wheelchair. The receiving microcontroller accepts these signals and initiates movement in the required direction. It is mounted on the wheelchair and is connected to the motor driver, the obstacle sensors, the joystick control and the emergency stop button. It can start the motion, change the direction or stop the system on receiving commands from any of these attachments.
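The source does not describe the format of the signals exchanged between the two microcontrollers. As a minimal sketch of the idea, the example below assumes a hypothetical one-byte code per command (`F`, `L`, `R`, `S`); these codes and the function names are illustrative only. The decode side is shown in Python purely to illustrate the round trip; on the receiving Arduino the equivalent logic would be written in C/C++.

```python
# Hypothetical single-byte wire format; not taken from the original design.
COMMAND_TO_BYTE = {
    "forward": b"F",
    "left": b"L",
    "right": b"R",
    "stop": b"S",
}
BYTE_TO_COMMAND = {code: name for name, code in COMMAND_TO_BYTE.items()}


def encode_command(command):
    """Transmitter side: map a command name to its byte, defaulting to stop."""
    return COMMAND_TO_BYTE.get(command, b"S")


def decode_command(raw):
    """Receiver side: map a received byte to a command, defaulting to stop."""
    return BYTE_TO_COMMAND.get(raw, "stop")


if __name__ == "__main__":
    for name in ("forward", "left", "right", "stop", "unknown"):
        sent = encode_command(name)
        print(name, "->", sent, "->", decode_command(sent))
```

Falling back to `stop` for anything unrecognized is a deliberate choice in this sketch: a corrupted or unexpected byte should halt the chair rather than move it.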

The wheelchair is fitted with four ultrasonic sensors to avoid collisions and injury to the user. Three of the sensors monitor the forward, left and right directions. Ultrasonic sensors use electrical-mechanical energy transformation to measure the distance from the sensor to the target object. The Arduino sounds the buzzer if an obstacle is detected 100-230 cm from the wheelchair, so that the obstacle can clear the way and give the wheelchair safe passage. If the obstacle is still present within 30 cm of the wheelchair, the Arduino sends a stop command to the motor driver, bringing the system to a halt. The fourth sensor is used for ground clearance: it measures the height between the sensor and the ground. The Arduino sends a stop command to the motor driver if there is a sudden step or slope.
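The obstacle rules above can be sketched as a small decision function. The 100-230 cm warning band and the 30 cm stop distance come from the text; the nominal ground distance and its tolerance are assumptions made for illustration, as are all the names. The text does not say what happens between 30 cm and 100 cm, so this sketch takes no action in that gap.

```python
from dataclasses import dataclass

# Thresholds stated in the text.
WARN_MIN_CM = 100.0
WARN_MAX_CM = 230.0
STOP_CM = 30.0

# Assumed values: the source gives no numbers for ground clearance.
GROUND_NOMINAL_CM = 10.0
GROUND_TOLERANCE_CM = 5.0


@dataclass
class SafetyDecision:
    buzzer: bool
    stop: bool


def evaluate_sensors(front_cm, left_cm, right_cm, ground_cm):
    """Decide whether to sound the buzzer and whether to stop the motors."""
    distances = (front_cm, left_cm, right_cm)
    # Buzzer: any obstacle inside the warning band.
    buzzer = any(WARN_MIN_CM <= d <= WARN_MAX_CM for d in distances)
    # Stop: any obstacle inside the stop distance.
    obstacle_stop = any(d <= STOP_CM for d in distances)
    # Stop: ground reading deviates too far from nominal (step or slope).
    ground_stop = abs(ground_cm - GROUND_NOMINAL_CM) > GROUND_TOLERANCE_CM
    return SafetyDecision(buzzer=buzzer, stop=obstacle_stop or ground_stop)


if __name__ == "__main__":
    print(evaluate_sensors(150, 300, 300, 10))  # obstacle in warning band
    print(evaluate_sensors(25, 300, 300, 10))   # obstacle inside stop range
    print(evaluate_sensors(300, 300, 300, 40))  # sudden drop in the ground
```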

The system uses lithium-ion cells to supply power to the components mounted on the wheelchair. The battery contains 30 Li-ion cells, each rated at 3.7 V and 1.5 Ah. The cells are connected in a 6x5 arrangement: five strings of six series-connected cells are connected in parallel. The battery therefore delivers 22.2 V overall with a capacity of 7.5 Ah.

A manual wheelchair is modified and fitted with motors so that users can move from one place to another independently, without help from others. The original wheels, which have ball bearings, are removed, and a coupling made of MS material is designed that accommodates the motor shaft and also fits the inner diameter of the wheel. The hole in the coupling is matched with the wheel, and the shaft is locked to avoid slipping.
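The battery figures quoted above follow from the usual series/parallel rules: series cells add voltage, parallel strings add capacity. The short check below reproduces them. The function name is illustrative, and the nominal energy figure is derived here from the stated ratings rather than quoted from the source.

```python
def pack_ratings(cell_voltage_v, cell_capacity_ah, cells_in_series, parallel_strings):
    """Return (pack voltage in V, pack capacity in Ah, nominal energy in Wh)."""
    voltage = cell_voltage_v * cells_in_series        # series adds voltage
    capacity = cell_capacity_ah * parallel_strings    # parallel adds capacity
    energy_wh = voltage * capacity                    # nominal stored energy
    return voltage, capacity, energy_wh


if __name__ == "__main__":
    v, ah, wh = pack_ratings(3.7, 1.5, cells_in_series=6, parallel_strings=5)
    print(f"{v:.1f} V, {ah:.1f} Ah, {wh:.1f} Wh")
```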

The series of images taken by the camera is transmitted to the base station (computer/laptop). The images are processed using the Open Source Computer Vision Library (OpenCV), where they are converted into an .xml file. OpenCV processing yields the length and width of the detected object (the pupil). The length and width of each region of the image are prescribed in the OpenCV algorithm. The pupil’s position determines the region in which it lies, which in turn gives the direction in which the eye is pointing. The processing divides the image into three regions (left, right and center). If the pupil lies in the right region, the wheelchair moves left; if it lies in the left region, the wheelchair moves right. This reversal is presumably because the camera faces the user and therefore sees a mirrored view of the eye. If the pupil lies in the center, the wheelchair moves straight ahead.
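The region test described above can be sketched in a few lines. This is only an illustration under stated assumptions: the frame is split into three equal vertical bands (the source says the region sizes are prescribed but gives no values), the pupil arrives as a bounding box in the `(x, y, w, h)` form that OpenCV detectors commonly return, and a missing detection maps to `stop`. The region-to-command table follows the mapping stated in the text, with the pupil in the right region turning the chair left.

```python
# Mapping from image region to wheelchair command, as stated in the text.
REGION_TO_COMMAND = {
    "right": "left",
    "left": "right",
    "center": "forward",
}


def classify_region(box_x, box_w, frame_w):
    """Return 'left', 'center' or 'right' for the box's horizontal center.

    Assumes three equal-width bands; the real thresholds are not given.
    """
    center_x = box_x + box_w / 2
    third = frame_w / 3
    if center_x < third:
        return "left"
    if center_x < 2 * third:
        return "center"
    return "right"


def command_for(detection, frame_w):
    """Turn a pupil bounding box (x, y, w, h), or None, into a command."""
    if detection is None:
        return "stop"  # no pupil found: stop, as in "all other cases"
    x, _y, w, _h = detection
    return REGION_TO_COMMAND[classify_region(x, w, frame_w)]


if __name__ == "__main__":
    frame_width = 640  # assumed frame width in pixels
    for box in [(20, 100, 60, 60), (290, 100, 60, 60), (500, 100, 60, 60), None]:
        print(box, "->", command_for(box, frame_width))
```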

The wheelchair can operate either in the eye (image) directed mode or joystick mode. The modes can be switched by long-pressing the joystick button.
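Mode switching by long press can be sketched as a two-state toggle. The long-press threshold of 1.5 seconds and the class and method names are assumptions; the source only states that a long press on the joystick button switches modes.

```python
LONG_PRESS_SECONDS = 1.5  # assumed threshold; not specified in the source


class ModeSwitch:
    """Toggle between 'eye' and 'joystick' mode on a long button press."""

    def __init__(self):
        self.mode = "eye"
        self._pressed_at = None

    def button_down(self, timestamp):
        """Record when the joystick button was pressed (seconds)."""
        self._pressed_at = timestamp

    def button_up(self, timestamp):
        """On release, toggle the mode if the press was long enough."""
        if self._pressed_at is None:
            return self.mode
        held = timestamp - self._pressed_at
        self._pressed_at = None
        if held >= LONG_PRESS_SECONDS:
            self.mode = "joystick" if self.mode == "eye" else "eye"
        return self.mode


if __name__ == "__main__":
    switch = ModeSwitch()
    switch.button_down(0.0)
    print(switch.button_up(0.3))  # short press: mode unchanged
    switch.button_down(1.0)
    print(switch.button_up(3.0))  # long press: mode toggles
```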

Eye directed mode: The user’s eye movement forms the basis of the entire system. The movement of the eyeballs is continuously tracked using a wireless camera. The camera is mounted in front of the eye so that the focus remains on the eye movement. The images are sent to a processing unit, i.e. a computer or laptop. Every image is processed and the required information is extracted from it. The processing unit has a USB connection to an Arduino. The eyeball movement information is sent to this transmitting Arduino, which then transmits it wirelessly to the receiving Arduino mounted on the wheelchair. The receiving Arduino is connected to the motor driver.

On receiving the appropriate command, the receiving Arduino directs the motion in the required direction. The motors use a differential steering mechanism, which ensures swift turning. The system has four ultrasonic sensors: three help avoid collisions in the left, right and forward directions, and the fourth is provided for ground clearance.
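Differential steering turns the chair by driving the two wheels at different speeds. A minimal sketch of how a received command might map to wheel speeds is shown below. The speed values and names are assumptions for illustration, with speeds expressed as a fraction of full motor output; the source does not give the actual drive levels.

```python
CRUISE = 0.6      # assumed forward speed, as a fraction of full output
INNER_TURN = 0.2  # assumed speed of the inner wheel while turning


def wheel_speeds(command):
    """Return (left_wheel, right_wheel) speeds for a command.

    The chair turns toward the slower wheel; unknown commands stop it.
    """
    if command == "forward":
        return (CRUISE, CRUISE)
    if command == "left":
        return (INNER_TURN, CRUISE)   # left wheel slower: turn left
    if command == "right":
        return (CRUISE, INNER_TURN)   # right wheel slower: turn right
    return (0.0, 0.0)                 # "stop" and anything unrecognized


if __name__ == "__main__":
    for cmd in ("forward", "left", "right", "stop"):
        print(cmd, wheel_speeds(cmd))
```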

Joystick mode: A joystick is provided as an additional feature to ensure movement when the user’s eyes are tired. A stop button on the wheelchair halts operation the instant it is pressed.

The system functions with an accuracy rate of 70-90%. The aim of this project is to contribute to society in a small way by setting out an idea for a system that could improve the lives of millions of people across the globe.

The direction in which the pupil is looking is decided by assigning a fixed range to each direction. The pupil is detected even in the presence of illumination glare, unless the glare covers the whole eye. When light hits the pupil and the glare spreads over the entire pupil, those pixels are ignored; treating the illuminated spots in this way leaves behind edges of maximum change that cannot be resolved, and the operator then takes another position to be the iris location. The process also works on images taken in a slightly dark environment.

