Updated on Mar 21, 2026

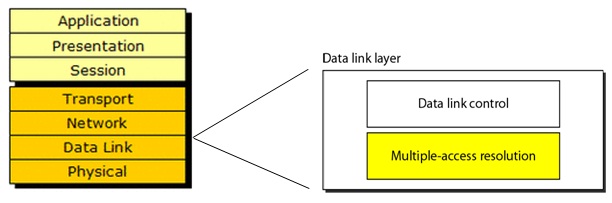

Media access control (MAC), also known as medium access control, is a sublayer of the data link layer (layer 2) of the seven-layer OSI model.

It provides addressing and channel access control mechanisms that make it possible for several terminals or network nodes to communicate within a multiple access network that incorporates a shared medium, e.g. Ethernet. The hardware that implements the MAC is referred to as a medium access controller. The MAC sublayer acts as an interface between the logical link control (LLC) sublayer and the network's physical layer. The MAC sublayer emulates a full-duplex logical communication channel in a multi-point network. This channel may provide unicast, multicast or broadcast communication service.

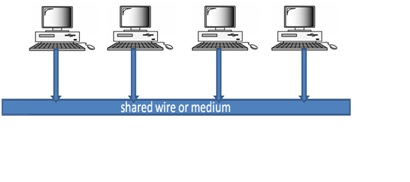

The channel access control mechanisms provided by the MAC layer are known as a multiple access protocol. Such a protocol makes it possible for several stations connected to the same physical medium to share it. Examples of shared physical media are bus networks, ring networks, hub networks, wireless networks and half-duplex point-to-point links. The multiple access protocol may detect or avoid data packet collisions if a packet-mode, contention-based channel access method is used, or reserve resources to establish a logical channel if a circuit-switched or channelization-based channel access method is used. The channel access control mechanism relies on a physical layer multiplex scheme.

Broadcast networks have a single communication channel that is shared by all the machines on the network. A packet sent by one computer is received by all the other computers on the network. Each packet contains the address of the receiving computer, and each computer checks this field to see whether it matches its own address. If it does not match, the packet is usually ignored; if it does, the packet is read. Broadcast channels are sometimes known as multi-access channels.

Sharing such a channel raises three questions:

• WHO is going to use the channel?

• WHEN is the channel going to be used?

• For HOW long is the channel going to be used?

Because the channel is shared and traffic over the network is unregulated, collisions and data loss occur. A protocol must therefore be followed to regulate transmission and keep it safe.
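The address check that every station on a broadcast channel performs can be sketched in a few lines of Python. This is only an illustration: the `Frame` class, the `accepts` function and the station addresses are hypothetical names made up for the example, and the all-ones broadcast address follows the Ethernet convention.

```python
from dataclasses import dataclass

# Ethernet-style broadcast address: every station accepts it.
BROADCAST = "ff:ff:ff:ff:ff:ff"


@dataclass
class Frame:
    """Hypothetical frame: a destination address plus a payload."""
    dst: str
    payload: str


def accepts(station_addr: str, frame: Frame) -> bool:
    """Return True if the station should read the frame, False if it ignores it."""
    dst = frame.dst.lower()
    return dst == station_addr.lower() or dst == BROADCAST


# Every station on the shared channel receives the frame,
# but only the addressed one reads it.
stations = ["00:00:00:00:00:01", "00:00:00:00:00:02", "00:00:00:00:00:03"]
frame = Frame(dst="00:00:00:00:00:02", payload="hello")
readers = [s for s in stations if accepts(s, frame)]
print(readers)
```

A frame addressed to `BROADCAST` would be accepted by all three stations.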

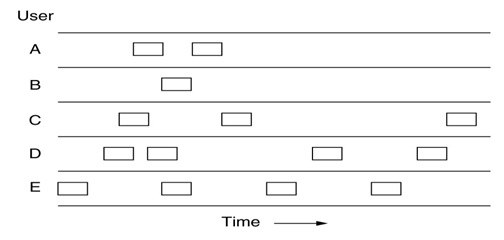

In a random access protocol, a transmitting node always transmits at the full rate of the channel, namely R bps. When there is a collision, each node involved in the collision repeatedly retransmits its frame (that is, packet) until the frame gets through without a collision. But when a node experiences a collision, it doesn't necessarily retransmit the frame right away. Instead, it waits a random delay before retransmitting the frame. Each node involved in a collision chooses its random delay independently. Because the delays are chosen independently, it is possible that one of the nodes will pick a delay that is sufficiently shorter than the delays of the other colliding nodes and will therefore be able to sneak its frame into the channel without a collision.
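The effect of independent random delays can be illustrated with a small simulation. This is a simplified sketch, not a model of any particular protocol: time is divided into slots, `resolve_collision` and `max_delay_slots` are names invented for the example, and all nodes still waiting redraw their delays after each round.

```python
import random


def resolve_collision(num_nodes: int, max_delay_slots: int,
                      rng: random.Random) -> list[int]:
    """Return the order in which colliding nodes get their frame through."""
    if max_delay_slots < 2:
        raise ValueError("need at least 2 possible delays to break ties")
    pending = set(range(num_nodes))
    finished = []
    while pending:
        # Each pending node independently picks a random delay.
        delays = {node: rng.randrange(max_delay_slots) for node in pending}
        shortest = min(delays.values())
        earliest = [n for n, d in delays.items() if d == shortest]
        if len(earliest) == 1:
            # Exactly one node has the shortest delay: it transmits alone.
            finished.append(earliest[0])
            pending.remove(earliest[0])
        # Otherwise the earliest nodes collide again and everyone redraws.
    return finished


order = resolve_collision(num_nodes=4, max_delay_slots=8, rng=random.Random(1))
print("frames got through in node order:", order)
```

A larger `max_delay_slots` makes ties for the shortest delay less likely, at the cost of longer average waits.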

There are dozens of random access protocols. Here we describe a few of the most commonly used: the ALOHA protocol, CSMA (carrier sense multiple access), CSMA/CD (carrier sense multiple access with collision detection) and CSMA/CA (carrier sense multiple access with collision avoidance).

The original version of ALOHA used two distinct frequencies in a hub/star configuration, with the hub machine broadcasting packets to everyone on the "outbound" channel, and the various client machines sending data packets to the hub on the "inbound" channel. If data was received correctly at the hub, a short acknowledgment packet was sent to the client; if an acknowledgment was not received by a client machine after a short wait time, it would automatically retransmit the data packet after waiting a randomly selected time interval. This acknowledgment mechanism was used to detect and correct for "collisions" created when two client machines both attempted to send a packet at the same time.

ALOHAnet's primary importance was its use of a shared medium for client transmissions. Unlike the ARPANET, where each node could only talk directly to a node at the other end of a wire or satellite circuit, in ALOHAnet all client nodes communicated with the hub on the same frequency. This meant that some sort of mechanism was needed to control who could talk at what time. The ALOHAnet solution was to allow each client to send its data without controlling when it was sent, with an acknowledgment/retransmission scheme used to deal with collisions. This became known as a pure ALOHA or random-access channel, and was the basis for subsequent Ethernet development and later Wi-Fi networks. Various versions of the ALOHA protocol (such as Pure ALOHA and Slotted ALOHA) also appeared later in satellite communications, and were used in wireless data networks such as ARDIS and GSM.

To assess Pure ALOHA, we need to predict its throughput, the rate of (successful) transmission of frames. First, let's make a few simplifying assumptions:

• All frames have the same length.

• Stations cannot generate a frame while transmitting or trying to transmit.

• The population of stations attempts to transmit (both new frames and old frames that collided) according to a Poisson distribution.

Let "T" refer to the time needed to transmit one frame on the channel, and let's define "frame-time" as a unit of time equal to T. Let "G" refer to the mean used in the Poisson distribution over transmission-attempt amounts: that is, on average, there are G transmission-attempts per frame-time.
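Under these assumptions, the standard textbook result for Pure ALOHA is that a frame succeeds only if no other transmission attempt starts within a vulnerable period of two frame-times, which for Poisson arrivals has probability e^(-2G). The throughput is then S = G · e^(-2G). The short Python sketch below evaluates this formula; the function name `pure_aloha_throughput` is chosen for the example only.

```python
import math


def pure_aloha_throughput(G: float) -> float:
    """Throughput S (successful frames per frame-time) for offered load G.

    Uses S = G * exp(-2G): G attempts per frame-time, each succeeding
    with probability exp(-2G) (no other attempt in a 2T window).
    """
    return G * math.exp(-2 * G)


for G in (0.1, 0.25, 0.5, 1.0, 2.0):
    print(f"G = {G:4.2f}  S = {pure_aloha_throughput(G):.3f}")
```

The formula peaks at G = 0.5, where S = 1/(2e), or about 0.184. In other words, under these assumptions at most roughly 18% of the channel capacity carries successful frames.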
