Updated on May 29, 2026

Smart glasses are wearable devices that display real-time information directly in the user’s field of vision by using Augmented Reality (AR) techniques. Generally, they can also perform more complex tasks, run some applications, and support Internet connectivity.

This paper provides an overview of some methods that can be adopted to allow gesture-based interaction with smart glasses, as well as some interaction design considerations. Additionally, it discusses some social effects induced by a widespread deployment of smart glasses, along with possible privacy concerns.

Head-worn displays (HWD) have recently gained significant attention, in particular thanks to the release of a preliminary version of Google Glass. Moreover, the anticipated commercial launch of Google Glass [6] in the upcoming months and the recent news that Facebook, Inc. acquired Oculus VR, the maker of the Oculus Rift [7], have increased the popularity of such devices even further. Purchases of wearable devices are growing considerably, and some business analysts forecast more than 20 million annual sales of Google Glass in 2018 [1]. Furthermore, researchers have already been studying and investigating HWD for several years.

As a consequence, it is important to give an overview of the different methods that could be used to interact with smart glasses. Above all, analysing privacy concerns and identifying the current and potential social implications of these devices is of great significance at this point.

The main purpose of smart glasses is to provide users with information and services that are relevant to their context and useful for performing their tasks; in other words, such devices augment users’ senses. In addition, they allow users to carry out the basic operations available on today’s common mobile devices, such as reading, writing e-mails, writing text messages, making notes, and answering calls. Therefore, although most usage of smart glasses is passive, i.e. reading content on the small screen of the device, active interaction is fundamental to control such devices and supply input. In fact, users need ways to ask smart glasses, for instance, to open a particular application, answer a question, insert content into e-mails, messages or input fields, or control games.

Before presenting how users can interact with smart glasses, it is worth mentioning the main aspects that have to be taken into account when designing such techniques. As for technical characteristics, a gesture recognition system for HWD should ideally be very accurate, i.e. able to distinguish fine shape-based gestures, insensitive to daylight, as small as possible, low in power consumption, and robust in the noisy and cluttered environments that are typical of everyday life. As far as user experience is concerned, the physical effort required of users to interact with the device is relevant, as are ease of use and the encumbrance of the device.

Two different categories of interaction methods can be distinguished for smart glasses: free form and others. The former includes, for example, eye tracking, wink detection, voice commands, and gestures performed with fingers or hands. The latter comprises, for instance, the use of hand-held devices, e.g. point-and-click controllers, joysticks, one-hand keyboards and smartphones, or smart watches to control the HWD. These examples should help to clarify the difference between the two kinds of interaction. Put simply, free-form interaction does not require any device other than the smart glasses themselves to be performed and detected; by contrast, controlling the smart glasses via smartphones, pointers, etc. clearly cannot satisfy the free-form criterion.

Hereinafter, we will focus only on gesture-based interaction, because it is preferable for ensuring a great user experience. Gesture-based interaction has been researched more than eye tracking and wink detection so far, and voice recognition is already widespread on today’s mobile devices. It is relevant to note that several different techniques for detecting gestures exist; analysing them in detail would stray from the purpose of this paper. Some of them make use of devices and sensors that have to be attached to the user’s body, e.g. to wrists, hands or fingers, whereas others exploit cameras that are external to the smart glasses and are located around the user.

In addition, some of these methods are used together with markers, e.g. reflective, infrared, or coloured ones, in order to identify the position of the user’s hands. Alternatively, gestures can be recognized by using cameras or sensors, such as RGB or 3D cameras and depth sensors, that can be embedded into smart glasses. It is essential to stress that truly free-form interaction is achieved only by using cameras or sensors embedded into the HWD, as they remove the need for external components and reduce encumbrance. As a consequence, these approaches are ideal for the commercial versions of smart glasses that will be launched on the market. By contrast, the other recognition techniques, which have been classified as non-free forms, are usually used in research studies in this context.

Another significant distinction applicable to gestures is based on where the gesture is performed. A common solution is to perform gestures very close to or directly on a surface, such as parts of the smart glasses themselves or of the user’s body. The following paragraphs present two studies that have investigated this approach.

Serrano et al. describe a study aimed at identifying which gestures and surfaces allow fast interaction with as little effort as possible. As regards the surface, they considered and compared results for some parts of both the smart glasses and the user’s face. In particular, the face was chosen because it is a part of the body we touch very often, i.e. 15.7 times/hour in a work setting; consequently, gestures performed on some parts of the face could be less intrusive than others and may reach a good level of acceptance. On the other hand, the high frequency of hand-face contacts creates the need for gesture delimiters, which users employ to inform the system that a new gesture is starting and to avoid unintentional triggers; for instance, voice commands or a long press on the surface can be used to invoke new gestures.
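
The delimiter idea can be illustrated with a minimal sketch. It assumes a long press opens a short time window in which subsequent touches count as gesture input, while every other contact is ignored as incidental. The names `Touch` and `segment_gestures`, the 0.8 s press threshold, and the 3 s window are illustrative assumptions and do not come from the study.

```python
from dataclasses import dataclass
from typing import List

# Assumed threshold: the text only says "long press"; 0.8 s is illustrative.
LONG_PRESS_SECONDS = 0.8


@dataclass
class Touch:
    """One hand-to-face contact: start time and duration, both in seconds."""
    start: float
    duration: float


def segment_gestures(touches: List[Touch], window: float = 3.0) -> List[Touch]:
    """Return the touches that should be treated as intentional gestures.

    A long press acts as the delimiter: it opens a window of `window` seconds,
    measured from the end of the press, during which later touches count as
    gesture input. Contacts outside any window are ignored.
    """
    accepted: List[Touch] = []
    window_end = float("-inf")
    for touch in sorted(touches, key=lambda t: t.start):
        if touch.duration >= LONG_PRESS_SECONDS:
            # Delimiter: (re)open the gesture window; not treated as input.
            window_end = touch.start + touch.duration + window
        elif touch.start <= window_end:
            accepted.append(touch)
    return accepted
```

With this scheme, a casual face touch before any long press is discarded, whereas the same touch shortly after a long press is passed on to the gesture recognizer.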

The need to find an alternative to performing gestures directly on the smart glasses arises from the fact that the touchable area available for interaction on such devices is usually only a narrow strip at the user’s temple. Because the interaction surface is so small, many gestures are needed to browse pages or applications that require a lot of panning and zooming. As a result, the time taken to reach a target in a page is negatively affected, and arm-shoulder fatigue is significant because of the prolonged lifting.
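
The effect of surface size on the number of gestures can be made concrete with a rough calculation. The sketch below assumes each swipe covers the full length of the surface and that finger movement maps linearly to panning distance; the function name `swipes_needed` and all numeric values are illustrative assumptions rather than measurements from the study.

```python
import math


def swipes_needed(pan_distance_px: int, surface_length_mm: int,
                  gain_px_per_mm: int) -> int:
    """Number of full-length swipes needed to pan a given distance.

    One swipe across the whole surface pans surface_length_mm * gain_px_per_mm
    pixels; the finger must then be lifted and repositioned for the next swipe.
    """
    if surface_length_mm <= 0 or gain_px_per_mm <= 0:
        raise ValueError("surface length and gain must be positive")
    pixels_per_swipe = surface_length_mm * gain_px_per_mm
    return math.ceil(pan_distance_px / pixels_per_swipe)


# Illustrative values only: a 30 mm temple strip vs. a 100 mm cheek area,
# both with an assumed gain of 10 px per mm, panning 3000 px.
temple_swipes = swipes_needed(3000, 30, 10)   # 10 swipes
cheek_swipes = swipes_needed(3000, 100, 10)   # 3 swipes
```

Under these assumed numbers the narrow strip requires more than three times as many swipes, which is consistent with the longer task times and higher fatigue described above.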

As far as performance is concerned, Figure 2 illustrates that the cheek obtained better results in terms of both the time taken and the exertion required to perform the interaction. In addition, using the cheek took the participants roughly 20 seconds to perform three different zooming operations, and they considered it the easiest method compared to the over-sized temple and the regular temple. The mean Borg value referenced in the plot comes from a scale for rating perceived exertion; in particular, it allows fatigue and breathlessness during physical work to be estimated. The last result presented for this study concerns the social acceptance of these gestures and shows that participants still prefer interacting with the smart glasses over using their face. This result is not only a matter of appearance, but is also due to other relevant aspects, e.g. hygiene issues, damage to make-up, the meaning of some gestures in other ethnic groups, etc.

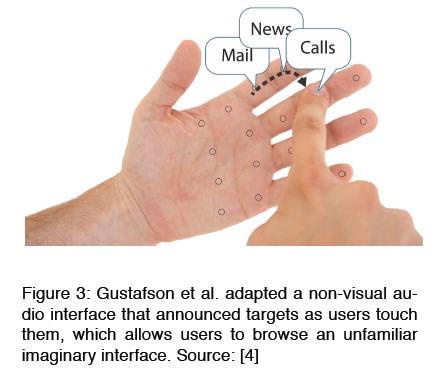

In conclusion, the study shows that the cheek is a valid alternative to the touchable areas on smart glasses in terms of performance; however, social approval is very important and may influence users even more than interaction speed and fatigue.

Palm-based imaginary interfaces. The purpose of this second study is to identify and quantify the role of visual and tactile cues when browsing imaginary interfaces [4]. Specifically, imaginary interfaces can be defined as spatial and non-visual interfaces for mobile devices; in this particular case, they consist of a mapping between applications to be opened or actions to be triggered and parts of the user’s hand (see Figure 3). This approach is very useful for impaired users and for eyes-free interaction with smart glasses and, above all, is less intrusive and tiring than hand-to-face and hand-to-HWD interaction.
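
A palm-based imaginary interface of this kind can be sketched as a lookup from palm regions to actions. The sketch assumes the palm is divided into a 3x3 grid and that the touch position is already available as normalised coordinates; the grid size, the action names, and the function `action_at` are hypothetical and are not taken from the study.

```python
from typing import Dict, Optional, Tuple

# Hypothetical layout: a 3x3 grid over the palm, each cell bound to an action.
GRID_ROWS, GRID_COLS = 3, 3
PALM_LAYOUT: Dict[Tuple[int, int], str] = {
    (0, 0): "open_mail",     (0, 1): "open_messages", (0, 2): "open_camera",
    (1, 0): "open_calendar", (1, 1): "answer_call",   (1, 2): "open_notes",
    (2, 0): "open_maps",     (2, 1): "open_music",    (2, 2): "open_settings",
}


def action_at(x: float, y: float) -> Optional[str]:
    """Map a normalised touch position on the palm to an action.

    x and y are in [0, 1], with (0, 0) at the top-left corner of the palm
    area. Positions outside that range return None.
    """
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        return None
    # Clamp so that x == 1.0 or y == 1.0 falls into the last column or row.
    col = min(int(x * GRID_COLS), GRID_COLS - 1)
    row = min(int(y * GRID_ROWS), GRID_ROWS - 1)
    return PALM_LAYOUT.get((row, col))
```

Because the mapping is purely spatial, the user can rely on the feeling of where the palm is touched rather than on any visual feedback.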

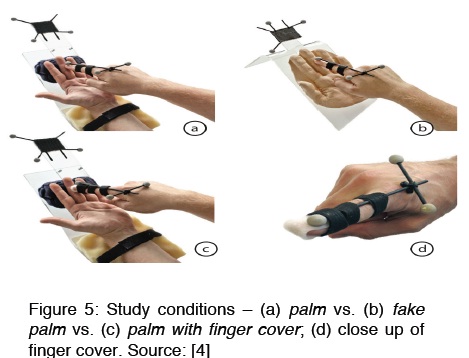

Figure 4 shows the four conditions investigated in this study. When users were not blindfolded (Figure 4a), the grid drawn on the fake phone (Figure 4c) oriented them on the screen and helped them find targets, which were reached faster than on the palm (Figure 4d). In contrast, when users were blindfolded, touching the palm was faster than touching the fake phone; this is of great importance because it demonstrates that the tactile cues users receive when touching their own palms are very significant. Having found that tactile cues are relevant, it is interesting to understand how the two tactile senses, i.e. active and passive, help users browse the interface. In particular, the active sense is perceived by the finger that actively touches the palm, whereas the passive one is sensed by the palm when it is touched by the finger.

Therefore, a second experiment was conducted as a 3x2 factorial design in which the measured variable and one factor, i.e. the user condition (sighted vs. blindfolded), remained the same as in the first experiment. As for the other factor, i.e. the touching surface, touching the palm with a covered finger was tested in addition to touching both the real and the fake palm with a bare finger. The results were meaningful: browsing on the fake palm is much slower than on the real palm, whereas there is no significant difference between touching the real palm with a covered finger and with a bare one. Consequently, the majority of tactile cues come from the passive tactile sense rather than the active one, contrary to what the authors expected.
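
To make the 3x2 factorial design concrete, the following sketch enumerates its six conditions by crossing the three touching surfaces with the two user conditions. The level labels paraphrase the description above; the helper `factorial_conditions` is a hypothetical name used for illustration.

```python
from itertools import product
from typing import List, Sequence, Tuple

# Factor levels, paraphrased from the description of the second experiment.
SURFACES = [
    "real palm, bare finger",
    "real palm, covered finger",
    "fake palm, bare finger",
]
USER_CONDITIONS = ["sighted", "blindfolded"]


def factorial_conditions(*factors: Sequence[str]) -> List[Tuple[str, ...]]:
    """Return every combination of levels across the given factors."""
    return list(product(*factors))


# 3 surfaces x 2 user conditions = 6 experimental conditions.
conditions = factorial_conditions(SURFACES, USER_CONDITIONS)
for surface, user_condition in conditions:
    print(f"{surface} / {user_condition}")
```

Comparing the covered-finger and bare-finger rows isolates the active tactile sense, while comparing the real-palm and fake-palm rows isolates the passive one.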

To conclude the second section and the overview in general, it is interesting to observe that the ethnic group plays a fundamental role when social implications and the concept of privacy are considered. In contrast to the data presented at the beginning of this section about the willingness to buy and wear Google Glass and its acceptance in the USA, Indian people are very enthusiastic about smart glasses and their potential and are not worried about privacy at all. This may be a consequence of the very high population density in India and suggests that people do not mind being captured or recorded within a huge crowd, probably because identifying and tracking a single individual is very difficult.

Moreover, worries about privacy and social implications also depend on users’ age; it was really surprising to read about two grandparents who tried Google Glass and were very excited and satisfied with it. They even proposed interesting applications, such as tracking medicine consumption and livestreaming to receive advice while gardening; they also said that they would like to receive such a device and use it every day.

To sum up, what emerges from this overview is that the biggest issues related to smart glasses are well known: similar matters were raised when, for instance, camera phones became popular [19], as well as when phones started to identify users’ locations. Nevertheless, both of these features are commonly used in ordinary life today, and almost no one has given up using mobile devices because of them. In fact, expectations of privacy change after devices have been used for a while: users only need to get used to them and then, once they realize that they feel safer and empowered, they no longer worry about privacy matters.

Research done by Marica Bertarini, ETH Zurich, Department of Computer Science, Zurich, Switzerland

1. Statista. Google Glass annual sales forecast from 2014 to 2018. 2 May 2014 https://www.statista.com/statistics/259348/google-glass-annual-sales-forecast/

2. Colaco, A., Kirmani, A., Hyang, H. S., Gong, N., Schmandt, C., Goyal, V. K. Mime: Compact, low power 3D gesture sensing for interaction with head mounted displays. UIST 2013: 227-236

3. Electronic Privacy Information Center, Google Glass and privacy. 2 May 2014 https://epic.org/privacy/google/glass/default.html

4. Kim, L. Google Glass delivers new insight during surgery. 2 May 2014 https://www.ucsf.edu/news/2013/10/109526/surgeon-improves-safety-efficiency-operating-room-google-glass

5. Camera phones threat to privacy. 2 May 2014 https://news.bbc.co.uk/2/hi/technology/4017225.stm

6. https://www.google.com/glass/start/

7. https://www.oculusvr.com/
