Updated on Mar 21, 2026

Integrating a high-level 3D modeling interface with free-hand sketching reduces the human cognitive load in 3D creation. Modern CAD-based interfaces inhibit interaction through their low-level commands, thereby creating a psychological gap.

Here we intend to develop iSphere, a device with 24 degrees of freedom, to bypass the mental load of low-level commands. iSphere is an intuitive device embedded with 12 capacitive sensors that enables object design using a top-down approach. The interface uses simple push and pull commands to interact with the user. We believe that iSphere can save a lot of time by bypassing traditional mouse- and keyboard-based modeling.

Experimental results indicate that novices in 3D modeling learn faster with the help of iSphere. We claim that iSphere is designed to minimize the barrier between the human's cognitive model of what they want to accomplish and the computer's understanding of the user's task. As iSphere lacks reliability in its input mechanisms, this paper suggests improved algorithms for sensor control and novel methods to create 3D models.

For designers, freehand sketching can be a quick method to demonstrate an idea or principle. A sketch expresses an idea directly and interactively. Freehand sketching is a common way to model 2D objects, but 3D objects are complex and unintuitive to visualize this way. Recent developments in computer-aided design (CAD) help to address the problem. However, CAD involves a series of low-level commands and mode-switching operations to model a 3D object. This introduces a well-known problem for novices, who must learn the commands: it takes months to become an expert who can create 3D models intuitively.

The aim of this research was to develop a high-level modeling system that can reduce human cognitive load in 3D creation. It creates a new dimension for interacting with 3D models intuitively. It projects a designer's idea into a 3D model instantly, as iSphere manipulates 3D objects in a spatial way. Simply put, it is an input device that lets us focus on what is in our mind while building 3D models. As shown in Fig. 1, iSphere models an object through a spatial method.

The hypothesis was tested by conducting a study comparing performance between command-based interfaces and iSphere. We claim that our approach to developing a new 3D input interface is better than other research works because it is a novel form of '3D input interface'.

The hardware gives a vivid picture of iSphere, as it essentially captures all human hand actions. Constructing the right shape for iSphere was a challenge. Nevertheless, we built a foldable dodecahedron from acrylic material, with pentagonal faces. Every face is capable of sensing hands at eight degrees above its surface. Capacitive sensors mounted on iSphere measure physical actions. Shunt-mode operation is used to detect motion. Briefly, shunt-mode capacitive sensing works between a transmit and a receive electrode. For iSphere, the hand movement of the performer changes the electric field between the transmit and receive electrodes. Hence the current measured at the second (receive) electrode correlates with the change in the electric field. Normally, the working distance to the second electrode in shunt mode [9] is short (in our case less than six inches). The capacitive sensors detect the proximity of hands in twelve different directions, which correspond to the faces.
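The twelve sensing directions described above can be illustrated with a short sketch. The fact that the face normals of a regular dodecahedron coincide with the vertex directions of an icosahedron is standard geometry; everything else is an assumption made for illustration. The function names, the normalized proximity readings (0 for no hand, 1 for a hand at the surface), and the weighted-sum estimate of hand direction are hypothetical and are not taken from the iSphere design.

```python
import math

PHI = (1.0 + math.sqrt(5.0)) / 2.0  # golden ratio


def dodecahedron_face_normals():
    """Return 12 unit vectors, one per face of a regular dodecahedron.

    These are the normalized icosahedron vertices: the cyclic
    permutations of (0, +/-1, +/-PHI).
    """
    raw = []
    for a in (1.0, -1.0):
        for b in (PHI, -PHI):
            raw.extend([(0.0, a, b), (b, 0.0, a), (a, b, 0.0)])
    length = math.sqrt(1.0 + PHI * PHI)
    return [(x / length, y / length, z / length) for x, y, z in raw]


def hand_direction(readings, normals):
    """Estimate where the hand is as a proximity-weighted sum of normals.

    `readings` holds one hypothetical normalized proximity value per face.
    Returns a unit vector, or None when the readings cancel out.
    """
    if len(readings) != len(normals):
        raise ValueError("expected one reading per face")
    total = [0.0, 0.0, 0.0]
    for reading, normal in zip(readings, normals):
        for axis in range(3):
            total[axis] += reading * normal[axis]
    magnitude = math.sqrt(sum(c * c for c in total))
    if magnitude < 1e-12:
        return None
    return tuple(c / magnitude for c in total)
```

With a single active face, `hand_direction` returns that face's normal; with several active faces it returns a blend, which is one simple way to treat the twelve sensors as a coarse directional sensor.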

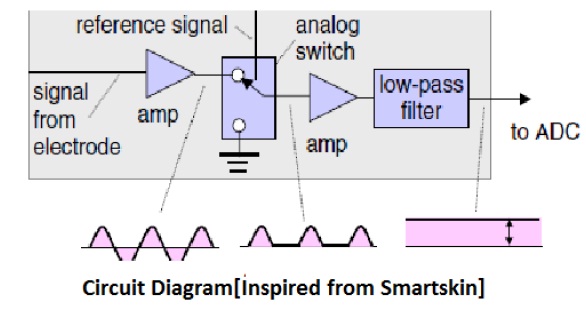

Fig. 6 shows that the signals received from the capacitive sensors are level-adjusted by a signal conditioning circuit (amplifier, switch, and low-pass filter). Finally, the digital input is received by a PIC microcontroller, which interfaces the incoming digital input to the software module.
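As a rough software analogue of the conditioning chain described above, the sketch below smooths a stream of raw samples with a first-order low-pass filter and then converts each value to a discrete level. This is not the circuit or firmware used in iSphere: the filter here is a digital exponential smoother standing in for the analog low-pass stage, and the names `low_pass` and `quantize`, the smoothing factor `alpha`, the 0-to-1 signal range, and the default of eight output levels are all assumptions chosen for illustration.

```python
def low_pass(samples, alpha=0.2):
    """First-order IIR low-pass filter (exponential smoothing).

    alpha close to 0 smooths heavily; alpha = 1 passes samples unchanged.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    state = None
    for sample in samples:
        # Seed the filter with the first sample, then move toward each new one.
        state = sample if state is None else state + alpha * (sample - state)
        smoothed.append(state)
    return smoothed


def quantize(value, levels=8, v_min=0.0, v_max=1.0):
    """Map a conditioned value to an integer level in 0 .. levels - 1.

    The level count and the signal range are hypothetical parameters.
    """
    clamped = min(max(value, v_min), v_max)
    scaled = (clamped - v_min) / (v_max - v_min)
    return min(int(scaled * levels), levels - 1)
```

A smaller `alpha` rejects more noise at the cost of a slower response to hand movement, which is the same trade-off an analog low-pass stage makes through its cutoff frequency.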

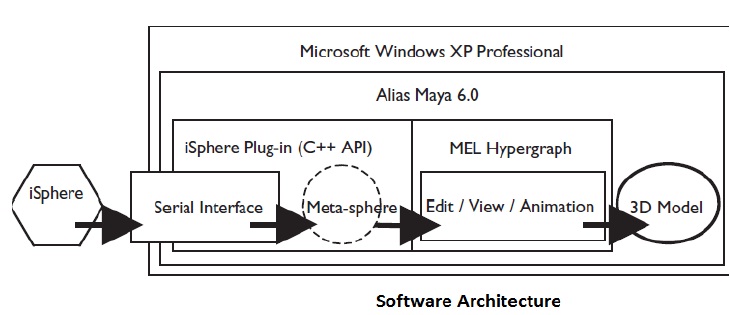

As shown in Fig. 7, the iSphere hardware is connected to the microcontroller through a serial interface (RS232). The meta-sphere mapping maps the input signals onto a meta-sphere and from there onto a target 3D object. We use the Alias-Wavefront Maya 6.0 C++ API (Application Programming Interface) to build the iSphere plug-in. 3D manipulation is realized in MEL (Maya Embedded Language). MEL modifies the functions by drawing relationships from data. The system architecture is flexible for future upgrades, and new functions can easily be added. Currently, iSphere manipulates 3D mesh-based models in Alias-Wavefront Maya, 3DS Max, or Rhino.
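The idea of mapping per-face input signals onto a target mesh can be sketched in a few lines. This is a hypothetical illustration, not the iSphere plug-in and not the Maya API: the function `deform`, the signed per-face signals (positive for pull, negative for push), the cosine-power weighting, and the `gain` and `sharpness` parameters are all assumptions. Each vertex is moved along its own direction from the object's center by a blend of the signals from the faces pointing toward it.

```python
import math


def deform(vertices, face_normals, face_signals, gain=0.1, sharpness=4):
    """Radially displace mesh vertices according to per-face push/pull signals.

    vertices      : list of (x, y, z) points centered on the origin
    face_normals  : list of unit vectors, one per sensing face
    face_signals  : signed values, > 0 pulls outward, < 0 pushes inward
    """
    deformed = []
    for vertex in vertices:
        length = math.sqrt(sum(c * c for c in vertex))
        if length == 0.0:
            deformed.append(tuple(vertex))  # the center has no direction
            continue
        direction = tuple(c / length for c in vertex)
        offset = 0.0
        for normal, signal in zip(face_normals, face_signals):
            alignment = sum(a * b for a, b in zip(direction, normal))
            # Only faces pointing toward this vertex influence it.
            weight = max(0.0, alignment) ** sharpness
            offset += weight * signal
        deformed.append(
            tuple(c + gain * offset * d for c, d in zip(vertex, direction))
        )
    return deformed
```

A higher `sharpness` keeps each face's influence local, while a lower value lets one push or pull reshape a wider region of the mesh.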

We found that high-level 3D modeling can reduce low-level manipulations, as objects are modeled intuitively. Experimental results indicate that modeling 3D objects using iSphere narrows the gap between novices and experts. As claimed, the paper suggests that a free-hand 3D input modeling interface is a novel development in 3D input hardware. The data show that iSphere can enhance the 3D modeling experience using natural human hand gestures. It moves the 3D modeling environment from non-interactive to interactive. iSphere leads a new paradigm for 3D designers, from abstract commands to natural hand interaction.

The thought process becomes more intuitive and direct. Hence, it becomes easier for novices to learn to realize complex 3D objects. Although iSphere is intuitive and direct, it lacks fidelity when 3D objects need to be modeled in a minimal span of time. One possible way to improve speed and accuracy is to increase the sensitivity of the capacitive sensors. Yet we need confirmatory studies in the area of sensor research. Readers may object that iSphere works only for specialized modes.

Nevertheless, it is important to understand that each method performs better in certain modes. This opens up unanswered questions and future directions dealing with robust mapping into shapes and improved algorithms for sensing control. Our research implies that an intuitive free-hand modeling interface like iSphere presents an effective way to express ideas directly without intense mental activity. It aims to make the interaction between users and computers more intuitive. The whole idea was designed to minimize the barrier between the human's cognitive model of what they want to accomplish and the computer's understanding of the user's task.

• iSphere: A free-hand 3D modeling interface. Chia-Hsun Jackie Lee, Yuchang Hu, and Ted Selker
