Published on Nov 30, 2023

Face detection is concerned with determining whether there are any faces in a given image (usually in grayscale) and, if any are present, returning the image location and content of each face. This is the first step of any fully automatic system that analyzes the information contained in faces (e.g., identity, gender, expression, age, race, and pose).

While earlier work dealt mainly with upright frontal faces, several systems have since been developed that can detect faces with in-plane or out-of-plane rotations fairly accurately and in real time. Although a face detection module is typically designed to deal with single images, its performance can be further improved if a video stream is available.

Face detection is the first stage of an automatic face recognition system, since a face has to be located in the input image before it can be recognized. A definition of face detection could be: given an image, detect all faces in it (if any) and locate their exact positions and sizes. Usually, face detection is a two-step procedure. First, the whole image is examined to find regions that are identified as "face". After the rough position and size of a face are estimated, a localization procedure follows, which provides a more accurate estimate of the exact position and scale of the face. So while face detection is mostly concerned with roughly finding all the faces in large, complex images that include many faces and much clutter, localization emphasizes spatial accuracy, usually achieved by accurate detection of facial features.

Early face-detection algorithms focused on the detection of frontal human faces, whereas newer algorithms attempt to solve the more general and difficult problem of multi-view face detection: that is, the detection of faces that are rotated along the axis from the face to the observer (in-plane rotation), rotated along the vertical or left-right axis (out-of-plane rotation), or both. The newer algorithms take into account variations in the image or video caused by factors such as face appearance, lighting, and pose.

Many algorithms implement face detection as a binary pattern-classification task. The content of a given part of an image is transformed into features, after which a classifier trained on example faces decides whether that particular region of the image is a face or not. Often, a window-sliding technique is employed: the classifier is applied to (usually square or rectangular) portions of the image, at all locations and scales, and labels each one as either face or non-face (background pattern).

Images with a plain or static background are easy to process: remove the background and only the face is left, assuming the image contains only a frontal face.

Using skin color to find face segments is a fragile technique, because the database of skin-color samples may not contain all possible skin colors.
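The window-sliding scan described in this section can be sketched as follows. This is only an illustration under stated assumptions: the names `sliding_windows` and `detect`, the window sizes, and the step are hypothetical, and `classify` stands in for whatever trained face/non-face classifier is available; none of these come from a specific library.

```python
from typing import Callable, Iterator, List, Sequence, Tuple

Image = List[List[int]]  # grayscale image stored as rows of pixel values


def sliding_windows(width: int, height: int,
                    sizes: Sequence[int], step: int) -> Iterator[Tuple[int, int, int]]:
    """Yield (x, y, size) for every square window that fits in the image."""
    for size in sizes:
        for y in range(0, height - size + 1, step):
            for x in range(0, width - size + 1, step):
                yield x, y, size


def detect(image: Image, classify: Callable[[Image], bool],
           sizes: Sequence[int] = (24, 48), step: int = 8) -> List[Tuple[int, int, int]]:
    """Return the windows that the (assumed) classifier labels as faces."""
    height = len(image)
    width = len(image[0]) if image else 0
    hits = []
    for x, y, size in sliding_windows(width, height, sizes, step):
        # Cut the window out of the image and hand it to the classifier.
        patch = [row[x:x + size] for row in image[y:y + size]]
        if classify(patch):
            hits.append((x, y, size))
    return hits
```

A real detector would usually also merge overlapping hits; that step is left out here to keep the sketch short.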

Lighting can also affect the results of skin-color segmentation, and inanimate objects with the same color as skin can be picked up, since the technique relies on color segmentation. Its advantages are that it places no restriction on the orientation or size of faces and that a good algorithm can handle complex backgrounds.

Faces are usually moving in real-time video, so calculating the moving area yields the face segment. However, other objects in the video may also be moving, which affects the results. A specific type of facial motion is blinking: detecting a blinking pattern in an image sequence can reveal the presence of a face. Eyes usually blink together and are symmetrically positioned, which rules out similar motions elsewhere in the video. Each image is subtracted from the previous image, and the difference image shows the boundaries of moved pixels. If the eyes happen to be blinking, there will be a small boundary within the face.

A face model can contain the appearance, shape, and motion of faces. There are several face shapes; common ones are oval, rectangle, round, square, heart, and triangle. Motions include, but are not limited to, blinking, raised eyebrows, flared nostrils, a wrinkled forehead, and an opened mouth. The face models cannot represent every person making every expression, but the technique does achieve an acceptable degree of accuracy. The models are passed over the image to find faces; however, this technique works better with face tracking: once the face is detected, the model is laid over the face and the system is able to track face movements.

One method for human face detection in color videos or images combines various methods of detecting color, shape, and texture. First, a skin-color model is used to single out objects of that color. Next, face models are used to eliminate false detections from the color model and to extract facial features such as the eyes, nose, and mouth.
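The frame-subtraction idea described above (each image subtracted from the previous one) can be illustrated with a minimal sketch. The function names and the difference threshold of 25 are assumptions made for the example, not values taken from this work.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]  # grayscale frame stored as rows of pixel values


def frame_difference(prev: Image, curr: Image, threshold: int = 25) -> Image:
    """Mark pixels whose intensity changed by more than `threshold` (assumed value)."""
    return [
        [1 if abs(c - p) > threshold else 0 for p, c in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev, curr)
    ]


def bounding_box(mask: Image) -> Optional[Tuple[int, int, int, int]]:
    """Return (x_min, y_min, x_max, y_max) of the moved pixels, or None if nothing moved."""
    moved = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not moved:
        return None
    xs = [x for x, _ in moved]
    ys = [y for _, y in moved]
    return min(xs), min(ys), max(xs), max(ys)
```

The bounding box of the moved pixels gives a candidate moving region; as noted above, other moving objects would also show up in the mask.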

Face detection is used in biometrics, often as part of (or together with) a facial recognition system. It is also used in video surveillance, human-computer interfaces, and image database management. Some recent digital cameras use face detection for autofocus. Face detection is also useful for selecting regions of interest in photo slideshows that use a pan-and-scale Ken Burns effect. Face detection is gaining the interest of marketers as well. A webcam can be integrated into a television and detect the face of anyone who walks by. The system then estimates the race, gender, and age range of the face. Once this information is collected, a series of advertisements targeted at the detected race, gender, and age can be played.

Face detection is also being researched in the area of energy conservation. Televisions and computers can save energy by reducing their brightness. People tend to watch TV while doing other tasks and are not focused on the screen the whole time, yet the TV brightness stays at the same level unless the user lowers it manually. With face detection, the system can recognize the direction in which the viewer's face is turned. When the viewer is not looking at the screen, the TV brightness is lowered; when the face returns to the screen, the brightness is increased.

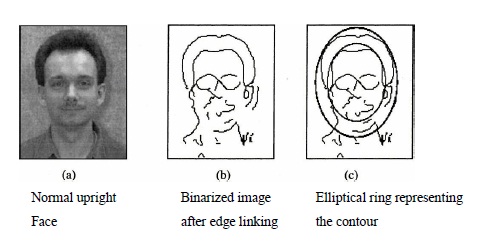

Here, we present an original template based on edge direction. It has been observed that the contour of a human head can be approximated by an ellipse. This accords with our visual perception and has been verified by numerous experiments. Existing methods have not made sufficient use of the global information in face images, of which edge direction is a crucial part, so we present a deformable template based on edge information to match the face contour.

The face contour is of course not a perfect ellipse. To achieve good performance, the template must tolerate some deviations.

In this paper, an elliptical ring is used as the template, as illustrated in Fig. 2.1.5. Fig. 2.1.5(a) is a normal upright face; Fig. 2.1.5(b) is the binary image after edge linking. In Fig. 2.1.5(b), we can see that the external contour cannot be represented by a single ellipse no matter how the parameters are adjusted. However, if we represent the contour with an elliptical ring, as shown in Fig. 2.1.5(c), almost all the edge points on the contour can be included. The other important advantage of this template is that we can choose a relatively large step in matching, which reduces the computational cost.
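To make the elliptical-ring idea concrete, here is a minimal sketch that counts how many edge points fall inside a ring around a candidate ellipse. It is an illustration under assumptions, not the matching procedure used in this work: the function names, the ring tolerance `tol`, and the scoring by simple point count are all hypothetical, and edge direction is not modeled.

```python
import math
from typing import Iterable, List, Tuple

Point = Tuple[float, float]


def in_ring(x: float, y: float, cx: float, cy: float,
            a: float, b: float, tol: float = 0.15) -> bool:
    """True if (x, y) lies between the ellipses scaled by (1 - tol) and (1 + tol)."""
    # r == 1 exactly on the ellipse with centre (cx, cy) and semi-axes a, b.
    r = math.hypot((x - cx) / a, (y - cy) / b)
    return 1 - tol <= r <= 1 + tol


def ring_score(edge_points: Iterable[Point], cx: float, cy: float,
               a: float, b: float, tol: float = 0.15) -> int:
    """Number of edge points covered by the elliptical ring."""
    return sum(1 for x, y in edge_points if in_ring(x, y, cx, cy, a, b, tol))


def best_center(edge_points: List[Point], centers: Iterable[Point],
                a: float, b: float, tol: float = 0.15) -> Point:
    """Candidate centre whose ring covers the most edge points (coarse search)."""
    return max(centers, key=lambda c: ring_score(edge_points, c[0], c[1], a, b, tol))
```

Because the ring tolerates deviations from a perfect ellipse, the candidate centres can be spaced fairly far apart, which is the cost advantage mentioned above.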

We follow a few steps to achieve our goal. First, we take an input image that contains a single face. We apply the Sobel operator to the input image to detect its edges, and then apply a threshold to binarize the result. The edges obtained from the Sobel operator are thick, so we apply a thinning algorithm, discussed later, to thin them. After thinning, we try to eliminate the noise present in the image and apply edge linking to the nearest points. Lastly, we apply template matching with the elliptical template to locate the face in the image. The steps are discussed in detail below.

We take as input an image file in PGM format. We then dynamically allocate memory for a two-dimensional array and copy the original image into it pixel by pixel.
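As a rough sketch of this input step, the following reads a PGM image into a two-dimensional list. The function name `parse_pgm` is hypothetical, and the code assumes an 8-bit image in either the ASCII (`P2`) or binary (`P5`) variant, with comments appearing only in the header.

```python
from typing import List


def parse_pgm(data: bytes) -> List[List[int]]:
    """Parse 8-bit PGM bytes (P2 or P5) into rows of pixel values."""
    pos = 0
    tokens = []
    # Header: magic number, width, height, maxval; '#' starts a comment line.
    while len(tokens) < 4:
        while data[pos:pos + 1].isspace():
            pos += 1
        if data[pos:pos + 1] == b"#":
            while data[pos:pos + 1] not in (b"\n", b""):
                pos += 1
            continue
        start = pos
        while pos < len(data) and not data[pos:pos + 1].isspace():
            pos += 1
        tokens.append(data[start:pos])
    magic = tokens[0]
    width, height, maxval = (int(t) for t in tokens[1:])
    if maxval > 255:
        raise ValueError("only 8-bit PGM files are handled in this sketch")
    if magic == b"P5":
        pos += 1  # exactly one whitespace byte separates the header from the raster
        values = list(data[pos:pos + width * height])
    elif magic == b"P2":
        values = [int(t) for t in data[pos:].split()][:width * height]
    else:
        raise ValueError("not a PGM file")
    if len(values) < width * height:
        raise ValueError("truncated pixel data")
    # Copy the flat pixel stream into a two-dimensional array, row by row.
    return [values[r * width:(r + 1) * width] for r in range(height)]
```

For a file on disk, the bytes can be obtained with `open(path, "rb").read()` and passed to the function.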

We apply the Sobel operator to detect the edges in the image. The Sobel operator is used in image processing, particularly within edge detection algorithms. Technically, it is a discrete differentiation operator that computes an approximation of the gradient of the image intensity function. At each point in the image, the result of the Sobel operator is either the corresponding gradient vector or the norm of this vector. The Sobel operator is based on convolving the image with a small, separable, integer-valued filter in the horizontal and vertical directions and is therefore relatively inexpensive computationally. On the other hand, the gradient approximation that it produces is relatively crude, in particular for high-frequency variations in the image.

In simple terms, the operator calculates the gradient of the image intensity at each point, giving the direction of the largest possible increase from dark to light and the rate of change in that direction. The result therefore shows how "abruptly" or "smoothly" the image changes at that point, and hence how likely it is that that part of the image represents an edge, as well as how that edge is likely to be oriented. In practice, the magnitude (likelihood of an edge) calculation is more reliable and easier to interpret than the direction calculation. Mathematically, the gradient of a two-variable function (here the image intensity function) is at each image point a 2D vector whose components are the derivatives in the horizontal and vertical directions. At each image point, the gradient vector points in the direction of the largest possible intensity increase, and the length of the gradient vector corresponds to the rate of change in that direction. This implies that the result of the Sobel operator at an image point in a region of constant image intensity is a zero vector, and at a point on an edge it is a vector that points across the edge, from darker to brighter values.
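The computation can be sketched in a few lines. This version assumes the common 3x3 Sobel kernels applied by cross-correlation, so a positive horizontal response means the intensity increases to the right; the sign flips under the opposite kernel convention, while the magnitude is unaffected. The function name `sobel` and the choice to leave border pixels at zero are assumptions made for the example.

```python
import math
from typing import List, Tuple

Image = List[List[int]]  # grayscale image stored as rows of pixel values

SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))   # horizontal derivative
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))   # vertical derivative


def sobel(image: Image) -> Tuple[List[List[int]], List[List[int]], List[List[float]]]:
    """Return (gx, gy, magnitude); border pixels are left at zero."""
    height, width = len(image), len(image[0])
    gx = [[0] * width for _ in range(height)]
    gy = [[0] * width for _ in range(height)]
    mag = [[0.0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            sx = sy = 0
            # Weighted sum over the 3x3 neighbourhood centred on (x, y).
            for j in range(3):
                for i in range(3):
                    p = image[y + j - 1][x + i - 1]
                    sx += SOBEL_X[j][i] * p
                    sy += SOBEL_Y[j][i] * p
            gx[y][x], gy[y][x] = sx, sy
            mag[y][x] = math.hypot(sx, sy)  # norm of the gradient vector
    return gx, gy, mag
```

On an image whose left part is dark and right part is bright, the magnitude is nonzero only in the columns next to the vertical boundary and zero in the constant regions.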

Thresholding is the simplest method of image segmentation. From a grayscale image, thresholding can be used to create binary images. During the thresholding process, individual pixels in an image are marked as "object" pixels if their value is greater than some threshold value (assuming the object is brighter than the background) and as "background" pixels otherwise. This convention is known as threshold above. Variants include threshold below, which is the opposite of threshold above; threshold inside, where a pixel is labeled "object" if its value is between two thresholds; and threshold outside, which is the opposite of threshold inside. Typically, an object pixel is given a value of "1" while a background pixel is given a value of "0". Finally, a binary image is created by coloring each pixel white or black, depending on the pixel's label. We use a threshold value of 55: pixels above this value are set to 255, and pixels below it are set to 0.
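A minimal sketch of the threshold-above rule with the value 55 follows. The function name is hypothetical, and treating a pixel exactly equal to the threshold as background is an assumption, since only the cases above and below the threshold are specified.

```python
from typing import List

Image = List[List[int]]  # grayscale image stored as rows of pixel values


def threshold_above(image: Image, threshold: int = 55,
                    high: int = 255, low: int = 0) -> Image:
    """Set pixels brighter than `threshold` to `high` and all others to `low`."""
    # A pixel equal to the threshold is treated as background (assumption).
    return [[high if p > threshold else low for p in row] for row in image]
```

Applied to the Sobel magnitude image, this produces the binary edge map used by the later steps.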

We have demonstrated the effectiveness of a new face detection algorithm on images with simple backgrounds: the algorithm is able to correctly detect all faces in such images. We are planning to test the method on a larger set of images, including images that do not contain faces. Such tests should help to reveal weak points of the algorithm and consequently give directions for improving it. We also intend to extend the algorithm to multi-face detection under two assumptions: first, that the number of faces is known before detection; second, that faces do not overlap each other in the image.

The hardware and software requirements for the development phase of our project are:

OPERATING SYSTEM : Windows 7 / XP Professional

FRONT END : Visual Studio 2010

BACK END : SQL SERVER 2005

PROCESSOR : PENTIUM IV 2.6 GHz, Intel Core 2 Duo.

RAM : 512 MB DDR RAM

HARD DISK : 40 GB

KEYBOARD : STANDARD 102 KEY

MONITOR : 15” COLOUR

CD DRIVE : LG 52x