Published on Nov 30, 2023

The scope of this project is to develop a 3D graphics library for embedded systems such as mobile devices. The library provides a basic subset of APIs that can render a moving 3D object on the screen. This subset contains the basic operations necessary for rendering 3D pictures, games, and movies. The APIs are implemented according to the OpenGL ES 1.1 specification.

The activities carried out include: a study of OpenGL based on its specification; a study and comparison of OpenGL ES with OpenGL; understanding and analysis of the state variables that represent the various states of the functionality to be added; a proposal for the new architecture and design; design of the new architecture and data structures; identification of the subset of APIs; implementation; and testing.

The APIs to be implemented were prioritized by their role in rendering the object on the screen, adding color and lighting effects to the object, and storing the coordinates of the object. Last came the movement of the object: rotating it through an angle on the screen, translating it through a distance across the screen, and scaling, which determines the position at which the object exists on the screen based on the depth buffer values.
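The three movements named above are conventionally expressed as 4x4 matrices applied to each vertex. The following is a minimal sketch of that idea, not the library's actual code: the `Mat4` type and every `mat4_*` function are hypothetical names introduced for illustration. The rotation helper assumes the caller supplies the cosine and sine of the angle (for instance from a lookup table), which keeps the sketch independent of the math library.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical 4x4 matrix, stored column-major as OpenGL stores matrices:
   the element at (row, col) lives at m[col * 4 + row]. */
typedef struct { float m[16]; } Mat4;

/* Identity matrix: leaves every vertex unchanged. */
Mat4 mat4_identity(void) {
    Mat4 r = {{1.0f, 0.0f, 0.0f, 0.0f,
               0.0f, 1.0f, 0.0f, 0.0f,
               0.0f, 0.0f, 1.0f, 0.0f,
               0.0f, 0.0f, 0.0f, 1.0f}};
    return r;
}

/* Translation: the offsets occupy the fourth column. */
Mat4 mat4_translate(float tx, float ty, float tz) {
    Mat4 r = mat4_identity();
    r.m[12] = tx; r.m[13] = ty; r.m[14] = tz;
    return r;
}

/* Scaling: one factor per axis on the diagonal. */
Mat4 mat4_scale(float sx, float sy, float sz) {
    Mat4 r = mat4_identity();
    r.m[0] = sx; r.m[5] = sy; r.m[10] = sz;
    return r;
}

/* Rotation about the z axis. The caller passes c = cos(angle) and
   s = sin(angle); this is an assumption of the sketch, not a GL convention. */
Mat4 mat4_rotate_z(float c, float s) {
    Mat4 r = mat4_identity();
    r.m[0] = c;  r.m[1] = s;
    r.m[4] = -s; r.m[5] = c;
    return r;
}

/* Matrix product a * b: b is applied to a vertex first, then a. */
Mat4 mat4_mul(const Mat4 *a, const Mat4 *b) {
    Mat4 r;
    assert(a != NULL && b != NULL);
    for (int col = 0; col < 4; ++col) {
        for (int row = 0; row < 4; ++row) {
            float sum = 0.0f;
            for (int k = 0; k < 4; ++k)
                sum += a->m[k * 4 + row] * b->m[col * 4 + k];
            r.m[col * 4 + row] = sum;
        }
    }
    return r;
}

/* Transform the point (v[0], v[1], v[2], 1) in place. */
void mat4_transform_point(const Mat4 *m, float v[3]) {
    assert(m != NULL && v != NULL);
    float x = v[0], y = v[1], z = v[2];
    v[0] = m->m[0] * x + m->m[4] * y + m->m[8]  * z + m->m[12];
    v[1] = m->m[1] * x + m->m[5] * y + m->m[9]  * z + m->m[13];
    v[2] = m->m[2] * x + m->m[6] * y + m->m[10] * z + m->m[14];
}
```

For example, multiplying `mat4_translate(2, 3, 0)` by `mat4_rotate_z(0, 1)` (a quarter turn) and applying the result to the point (1, 0, 0) first rotates it to (0, 1, 0) and then moves it to (2, 4, 0). Order matters: swapping the two factors gives a different result.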

The coordinates of the object and the color components corresponding to its RGBA values are stored and then rendered using various state variables.
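One way to picture this storage is a small record that points at the position and color arrays and carries a state variable saying whether per-vertex colors are in use. The sketch below is loosely modeled on the client-side vertex arrays of OpenGL ES 1.1, but `ArrayState`, `Vertex`, and `gather_vertices` are hypothetical names, and the layout (3 floats per position, 4 floats per color) is an assumption made for illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical record of where the stored arrays live and which are active. */
typedef struct {
    const float *positions;    /* 3 floats (x, y, z) per vertex */
    const float *colors;       /* 4 floats (r, g, b, a) per vertex */
    int color_array_enabled;   /* state variable: use per-vertex colors? */
    float current_color[4];    /* color used when the array is disabled */
} ArrayState;

/* One assembled vertex, ready to hand to the later rendering stages. */
typedef struct {
    float pos[3];
    float rgba[4];
} Vertex;

/* Copy `count` vertices starting at index `first` into `out`,
   choosing each color according to the state variables.
   Returns the number of vertices written. */
size_t gather_vertices(const ArrayState *st, size_t first, size_t count,
                       Vertex *out) {
    assert(st != NULL && st->positions != NULL && out != NULL);
    for (size_t i = 0; i < count; ++i) {
        size_t v = first + i;
        for (int k = 0; k < 3; ++k)
            out[i].pos[k] = st->positions[v * 3 + k];
        /* Fall back to the single current color if the array is off. */
        const float *src = (st->color_array_enabled && st->colors != NULL)
                               ? &st->colors[v * 4]
                               : st->current_color;
        for (int k = 0; k < 4; ++k)
            out[i].rgba[k] = src[k];
    }
    return count;
}
```

Keeping the enable flag separate from the data means the same stored arrays can be rendered with or without per-vertex color simply by changing one state variable.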

OpenGL is a software interface to graphics hardware. This interface consists of about 150 distinct commands that you use to specify the objects and operations needed to produce interactive three-dimensional applications. OpenGL is designed as a streamlined, hardware-independent interface to be implemented on many different hardware platforms.

To achieve these qualities, OpenGL includes no commands for performing windowing tasks or obtaining user input. Nor does it provide high-level commands for describing models of three-dimensional objects. Such commands might allow you to specify relatively complicated shapes such as automobiles, parts of the body, airplanes, or molecules. With OpenGL, you must instead build up the desired model from a small set of geometric primitives: points, lines, and polygons.

A sophisticated library that provides these features could certainly be built on top of OpenGL. The OpenGL Utility Library (GLU) provides many of the modeling features, such as quadric surfaces and NURBS curves and surfaces. GLU is a standard part of every OpenGL implementation. Also, there is a higher-level, object-oriented toolkit, Open Inventor, which is built atop OpenGL, and is available separately for many implementations of OpenGL.
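To make the idea of building a model from primitives concrete, the sketch below describes a unit square as a strip of two triangles and counts how many primitives a given number of vertices yields in each drawing mode. The enum and function names are hypothetical. The modes listed are the point, line, and triangle modes of OpenGL ES 1.1, which keeps triangles as its only polygon primitive.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical names for the drawing modes; not the real GL constants. */
typedef enum {
    PRIM_POINTS,
    PRIM_LINES,
    PRIM_LINE_STRIP,
    PRIM_LINE_LOOP,
    PRIM_TRIANGLES,
    PRIM_TRIANGLE_STRIP,
    PRIM_TRIANGLE_FAN
} PrimMode;

/* A unit square in the z = 0 plane, ordered as a triangle strip:
   vertices 0-1-2 form the first triangle, 1-2-3 the second. */
const float SQUARE_STRIP[4][3] = {
    {0.0f, 0.0f, 0.0f},
    {1.0f, 0.0f, 0.0f},
    {0.0f, 1.0f, 0.0f},
    {1.0f, 1.0f, 0.0f}
};

/* Number of points, line segments, or triangles produced by n vertices.
   Leftover vertices that cannot complete a primitive are ignored. */
size_t primitive_count(PrimMode mode, size_t n) {
    switch (mode) {
    case PRIM_POINTS:         return n;
    case PRIM_LINES:          return n / 2;
    case PRIM_LINE_STRIP:     return n >= 2 ? n - 1 : 0;
    case PRIM_LINE_LOOP:      return n >= 2 ? n : 0;
    case PRIM_TRIANGLES:      return n / 3;
    case PRIM_TRIANGLE_STRIP: /* same count as a fan */
    case PRIM_TRIANGLE_FAN:   return n >= 3 ? n - 2 : 0;
    }
    assert(!"unknown primitive mode");
    return 0;
}
```

The four vertices of `SQUARE_STRIP` give two triangles in strip mode, whereas describing the same square with independent triangles would need six vertices. This is one reason strips and fans are attractive on memory-constrained devices.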

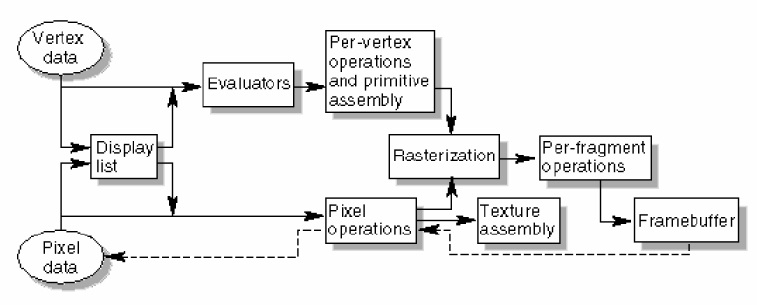

Most implementations of OpenGL have a similar order of operations, a series of processing stages called the OpenGL rendering pipeline. This ordering, shown in the figure, is not a strict rule of how OpenGL is implemented, but it provides a reliable guide for predicting what OpenGL will do.

The diagram shows the Henry Ford assembly line approach that OpenGL takes to processing data. Geometric data (vertices, lines, and polygons) follow the path through the row of boxes that includes evaluators and per-vertex operations, while pixel data (pixels, images, and bitmaps) are treated differently for part of the process. Both types of data undergo the same final steps (rasterization and per-fragment operations) before the final pixel data is written into the framebuffer.

• OpenGL is concerned only with rendering into a framebuffer (and reading values stored in that framebuffer).

• There is no support for other peripherals sometimes associated with graphics hardware, such as mice and keyboards.

• Programmers must rely on other mechanisms to obtain user input.

• The GL draws primitives subject to a number of selectable modes. Each primitive is a point, line segment, polygon, or pixel rectangle. Each mode may be changed independently; the setting of one does not affect the settings of others (although many modes may interact to determine what eventually ends up in the framebuffer).

• Modes are set, primitives specified, and other GL operations described by sending commands in the form of function or procedure calls.

• Primitives are defined by a group of one or more vertices. A vertex defines a point, an endpoint of an edge, or a corner of a polygon where two edges meet. Data (consisting of positional coordinates, colors, normals, and texture coordinates) are associated with a vertex and each vertex is processed independently, in order, and in the same way. The only exception to this rule is if the group of vertices must be clipped so that the indicated primitive fits within a specified region; in this case vertex data may be modified and new vertices created. The type of clipping depends on which primitive the group of vertices represents.

• Commands are always processed in the order in which they are received, although there may be an indeterminate delay before the effects of a command are realized. This means, for example, that one primitive must be drawn completely before any subsequent one can affect the framebuffer. It also means that queries and pixel read operations return state consistent with complete execution of all previously invoked GL commands, except where explicitly specified otherwise.
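Two of the points above, independently settable modes and strictly ordered command processing, can be illustrated with a toy state machine. This is a sketch under stated assumptions rather than how any real GL implementation works: the `Context` type, the three modes, and all `ctx_*` functions are hypothetical. Commands are queued when issued and executed later in arrival order, and a query drains the queue first so that its answer reflects every earlier command.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical selectable modes; each is stored and changed independently. */
typedef enum { MODE_LIGHTING, MODE_DEPTH_TEST, MODE_BLEND, MODE_COUNT } Mode;

typedef enum { CMD_ENABLE, CMD_DISABLE, CMD_DRAW } CmdType;

typedef struct { CmdType type; int arg; } Command;

#define QUEUE_CAPACITY 16

typedef struct {
    int enabled[MODE_COUNT];        /* current setting of every mode */
    Command queue[QUEUE_CAPACITY];  /* issued but not yet executed */
    size_t pending;
    int drawn_id[QUEUE_CAPACITY];   /* ids of primitives drawn, in order */
    int drawn_lit[QUEUE_CAPACITY];  /* lighting mode seen by each draw */
    size_t draws;
} Context;

void ctx_init(Context *c) {
    assert(c != NULL);
    for (int i = 0; i < MODE_COUNT; ++i) c->enabled[i] = 0;
    c->pending = 0;
    c->draws = 0;
}

/* Issue a command; it may execute later. Returns 0 if the queue is full. */
int ctx_submit(Context *c, CmdType type, int arg) {
    assert(c != NULL);
    assert(type == CMD_DRAW || (arg >= 0 && arg < MODE_COUNT));
    if (c->pending == QUEUE_CAPACITY) return 0;
    c->queue[c->pending].type = type;
    c->queue[c->pending].arg = arg;
    c->pending++;
    return 1;
}

/* Execute all pending commands strictly in the order they were received. */
void ctx_flush(Context *c) {
    assert(c != NULL);
    for (size_t i = 0; i < c->pending; ++i) {
        const Command *cmd = &c->queue[i];
        switch (cmd->type) {
        case CMD_ENABLE:  c->enabled[cmd->arg] = 1; break;
        case CMD_DISABLE: c->enabled[cmd->arg] = 0; break;
        case CMD_DRAW:
            if (c->draws < QUEUE_CAPACITY) {
                c->drawn_id[c->draws] = cmd->arg;
                c->drawn_lit[c->draws] = c->enabled[MODE_LIGHTING];
                c->draws++;
            }
            break;
        }
    }
    c->pending = 0;
}

/* A query completes all earlier commands before it answers. */
int ctx_is_enabled(Context *c, Mode mode) {
    ctx_flush(c);
    return c->enabled[mode];
}
```

If lighting is enabled, primitive 1 is drawn, lighting is disabled, and primitive 2 is drawn, then nothing may have executed by the time the last call returns. Once the queue drains, however, primitive 1 is recorded as lit and primitive 2 as unlit, exactly as the issue order dictates, and the other modes are untouched throughout.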