Published on Apr 02, 2024

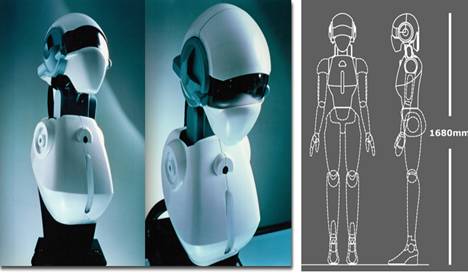

A key aspect of developing robots capable of Human-Robot Interaction (HRI) is research into natural, human-like communication and, subsequently, the development of a research platform with multiple HRI capabilities for evaluation.

Besides a flexible dialog system and speech understanding, an anthropomorphic appearance has the potential to support intuitive usage and understanding of a robot; e.g., human-like facial expressions and deictic gestures can be both produced and understood by the robot. As a consequence of our effort to create an anthropomorphic appearance and to come close to a human-human interaction model for a robot, we decided to use human-like sensors only, i.e., two cameras and two microphones, in analogy to human perceptual capabilities.

Despite the challenges resulting from these limits with respect to perception, a robust attention system for tracking and interacting with multiple persons simultaneously in real time is presented. The tracking approach is sufficiently generic to work on robots with varying hardware, as long as stereo audio data and images of a video camera are available. To easily implement different interaction capabilities like deictic gestures, natural adaptive dialogs, and emotion awareness on the robot, we apply a modular integration approach utilizing XML-based data exchange. The paper focuses on our efforts to bring together different interaction concepts and perception capabilities integrated on a humanoid robot to achieve comprehending human-oriented interaction.

For face detection, a method originally developed by Viola and Jones for object detection is adopted. Their approach uses a cascade of simple rectangular features that allows a very efficient binary classification of image windows into either the face or the non-face class. This classification step is executed for different window positions and different scales to scan the complete image for faces. We apply the idea of a classification pyramid, starting with very fast but weak classifiers to reject image parts that are certainly not faces. With increasing complexity of the classifiers, the number of remaining image parts decreases. The training of the classifiers is based on the AdaBoost algorithm, which iteratively combines the weak classifiers into stronger ones until the desired level of quality is achieved.
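The early-rejection idea behind such a cascade can be sketched in a few lines. This is a minimal illustration, not the trained detector described above: the two toy stages (`mean` and `bottom_minus_top`), their thresholds, and the names `cascade_accepts` and `scan` are assumptions made for the example, and real stages would be AdaBoost-trained combinations of many rectangular features.

```python
from typing import Callable, List, Sequence, Tuple

Window = Sequence[Sequence[float]]               # grayscale patch, rows of pixels
Stage = Tuple[Callable[[Window], float], float]  # (score function, threshold)


def mean(window: Window) -> float:
    """Cheap first-stage feature: average brightness of the window."""
    values = [v for row in window for v in row]
    return sum(values) / len(values)


def bottom_minus_top(window: Window) -> float:
    """Toy two-rectangle feature: lower half brightness minus upper half."""
    half = len(window) // 2
    return mean(window[half:]) - mean(window[:half])


def cascade_accepts(window: Window, stages: List[Stage]) -> bool:
    """Accept only if every stage passes; stop at the first rejection."""
    for score, threshold in stages:
        if score(window) < threshold:
            return False  # later, costlier stages are never evaluated
    return True


def scan(image: Window, stages: List[Stage], sizes: Sequence[int], step: int = 1):
    """Slide square windows of each size (at least 2) over the image."""
    hits = []
    rows, cols = len(image), len(image[0])
    for size in sizes:
        for top in range(0, rows - size + 1, step):
            for left in range(0, cols - size + 1, step):
                window = [row[left:left + size] for row in image[top:top + size]]
                if cascade_accepts(window, stages):
                    hits.append((top, left, size))
    return hits


# Hypothetical stage list, ordered from cheapest to most selective.
TOY_STAGES: List[Stage] = [(mean, 0.4), (bottom_minus_top, 0.5)]
```

Ordering the stages from cheap to expensive is what makes the scan fast: most windows fail the first test and never reach the later ones.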

As an extension to the frontal view detection proposed by Viola and Jones, we additionally classify the horizontal gazing direction of faces, as shown in Fig. 4, by using four instances of the classifier pyramids described earlier, trained for faces rotated by 20°, 40°, 60°, and 80°. For classifying left- and right-turned faces, the image is mirrored at its vertical axis, and the same four classifiers are applied again.
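The mirroring trick can be illustrated as follows. This is only a sketch under stated assumptions: `peak_at` is a toy stand-in for a trained classifier pyramid, the sign convention (positive angles for the direction the classifiers were trained on, negative for the mirrored direction) and the `min_score` cutoff are hypothetical, and the source does not say which turn direction the four classifiers were trained for.

```python
from typing import Callable, Dict, Optional, Sequence

Window = Sequence[Sequence[float]]
Classifier = Callable[[Window], float]  # returns a confidence score


def mirror(window: Window):
    """Mirror the image patch at its vertical axis."""
    return [list(reversed(row)) for row in window]


def estimate_rotation(window: Window, frontal: Classifier,
                      rotated: Dict[int, Classifier],
                      min_score: float = 0.5) -> Optional[int]:
    """Return the best-matching rotation in degrees, or None if no face."""
    candidates = [(frontal(window), 0)]
    flipped = mirror(window)
    for angle, classify in rotated.items():
        candidates.append((classify(window), angle))    # trained direction
        candidates.append((classify(flipped), -angle))  # opposite direction
    score, angle = max(candidates, key=lambda c: c[0])
    return angle if score >= min_score else None


def peak_at(index: int) -> Classifier:
    """Toy classifier: fires when the brightest pixel of row 0 is at index."""
    def classify(window: Window) -> float:
        row = list(window[0])
        return 1.0 if row.index(max(row)) == index else 0.0
    return classify


# Hypothetical classifier set for a 9-pixel-wide toy window.
FRONTAL = peak_at(4)
ROTATED = {20: peak_at(5), 40: peak_at(6), 60: peak_at(7), 80: peak_at(8)}
```

Reusing the same four classifiers on the mirrored image halves the number of classifiers that have to be trained, at the cost of one extra image flip per window.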

The gazing direction is evaluated for activating or deactivating the speech processing, since the robot should not react to people talking to each other in front of the robot, but only to communication partners facing the robot. Subsequent to the face detection, a face identification is applied to the detected image region using the eigenface method to compare the detected face with a set of trained faces. For each detected face, the size, center coordinates, horizontal rotation, and results of the face identification are provided at a real-time capable frequency of about 7 Hz on an Athlon64 2 GHz desktop PC with 1 GB RAM.

As mentioned before, the limited field-of-view of the cameras demands alternative detection and tracking methods. Motivated by human perception, sound localization is applied to direct the robot's attention. The integrated speaker localization (SPLOC) realizes both the detection of possible communication partners outside the field-of-view of the camera and the estimation of whether a person found by face detection is currently speaking. The program continuously captures the audio data from the two microphones.

To estimate the relative direction of one or more sound sources in front of the robot, the direction of sound toward the microphones is considered. Depending on the position of a sound source in front of the robot, the run-time difference Δt results from the run times t_r and t_l of the right and left microphone. SPLOC compares the recorded audio signals of the left and the right microphone, using a fixed number of samples for a cross-power spectrum phase (CSP), to calculate the temporal shift between the signals. Taking the distance of the microphones d_mic and a minimum range of 30 cm to a sound source into account, it is possible to estimate the direction of a signal in a 2-D space. For multiple sound source detection, not only the main energy value of the CSP result is taken, but also all values exceeding an adjustable threshold.
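A CSP-style delay estimate can be sketched with the standard library alone. The following is an illustrative approximation, not SPLOC itself: it uses a naive O(n²) DFT to stay dependency-free, converts each lag to an angle with a far-field approximation (which ignores the 30 cm minimum-range handling mentioned above), and assumes a speed of sound of 343 m/s. The function names, the default threshold, and the sign convention (positive angles toward the right microphone) are assumptions.

```python
import cmath
import math
from typing import List, Sequence, Tuple


def dft(x: Sequence[complex], inverse: bool = False) -> List[complex]:
    """Naive discrete Fourier transform; adequate for short frames."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        s = sum(x[t] * cmath.exp(sign * 2j * math.pi * k * t / n)
                for t in range(n))
        out.append(s / n if inverse else s)
    return out


def csp_coefficients(left: Sequence[float], right: Sequence[float]) -> List[float]:
    """Phase-only cross-correlation; index tau peaks at delay_left - delay_right."""
    if len(left) != len(right):
        raise ValueError("both channels need the same number of samples")
    cross = [a * b.conjugate() for a, b in zip(dft(left), dft(right))]
    # Keep only the phase of each frequency bin (magnitude normalisation).
    whitened = [c / abs(c) if abs(c) > 1e-12 else 0j for c in cross]
    return [v.real for v in dft(whitened, inverse=True)]


def estimate_directions(left: Sequence[float], right: Sequence[float],
                        sample_rate: float, mic_distance: float,
                        threshold: float = 0.5,
                        speed_of_sound: float = 343.0) -> List[Tuple[int, float]]:
    """Return (lag in samples, angle in degrees) for every CSP value above threshold."""
    coeffs = csp_coefficients(left, right)
    n = len(coeffs)
    # Lags larger than the microphone spacing allows are physically impossible.
    max_lag = int(mic_distance * sample_rate / speed_of_sound)
    found = []
    for idx, value in enumerate(coeffs):
        lag = idx if idx <= n // 2 else idx - n  # unwrap circular index
        if abs(lag) > max_lag or value < threshold:
            continue
        sin_theta = speed_of_sound * lag / (sample_rate * mic_distance)
        sin_theta = max(-1.0, min(1.0, sin_theta))
        found.append((lag, math.degrees(math.asin(sin_theta))))
    return found
```

Returning every lag above the threshold, rather than only the maximum, mirrors the multiple-source handling described in the text; a production version would use an FFT and real recorded frames.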

In the 3-D space, the distance and height of a sound source are needed for an exact detection.

This information can be obtained by the face detection when SPLOC is used for checking whether a found person is speaking or not. For coarsely detecting communication partners outside the field-of-view, standard values are used that are sufficiently accurate to align the camera properly to get the person hypothesis into the field-of-view. The position of a sound source (a speaker's mouth) is assumed at a height of 160 cm for an average adult. The standard distance is adjusted to 110 cm, as observed during interactions with naive users.
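How the standard values turn a 2-D direction into a 3-D person hypothesis can be sketched as follows. Only the defaults of 160 cm and 110 cm come from the text; the coordinate frame (x to the robot's right, y forward, z up), the reading of the distance as a horizontal range, the `camera_height_cm` parameter, and the function names are assumptions made for this illustration.

```python
import math
from typing import Optional, Tuple

DEFAULT_DISTANCE_CM = 110.0  # standard distance from the text
DEFAULT_HEIGHT_CM = 160.0    # assumed mouth height of an average adult


def speaker_position(azimuth_deg: float,
                     distance_cm: Optional[float] = None,
                     height_cm: Optional[float] = None) -> Tuple[float, float, float]:
    """Place a sound source in 3-D, falling back to the standard values.

    Pass distance and height from face detection when they are available.
    """
    distance = DEFAULT_DISTANCE_CM if distance_cm is None else distance_cm
    height = DEFAULT_HEIGHT_CM if height_cm is None else height_cm
    azimuth = math.radians(azimuth_deg)
    x = distance * math.sin(azimuth)  # lateral offset
    y = distance * math.cos(azimuth)  # forward offset
    return (x, y, height)


def pan_tilt(position: Tuple[float, float, float],
             camera_height_cm: float) -> Tuple[float, float]:
    """Pan and tilt angles (degrees) that point a camera at the position."""
    x, y, z = position
    pan = math.degrees(math.atan2(x, y))
    tilt = math.degrees(math.atan2(z - camera_height_cm, math.hypot(x, y)))
    return (pan, tilt)
```

For example, `pan_tilt(speaker_position(30.0), camera_height_cm=160.0)` yields a pan of about 30 degrees and no tilt, which is enough to bring the person hypothesis into the field-of-view so that face detection can refine it.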
