Published on Feb 21, 2020

The term "bio-inspired" denotes a strong relation between a system or algorithm proposed to solve a specific problem and a biological system that follows a similar procedure or has similar capabilities.

In the last 15 years, we have witnessed unprecedented growth of the Internet. Moreover, the Internet continues to evolve at a rapid pace in order to utilize the latest technological advances and meet new usage demands. It has been a great research challenge to find an effective means to influence its future and to address a number of important issues facing the Internet today, such as overall system security, routing scalability, effective mobility support for large numbers of moving components, and the various demands put on the network by the ever-increasing number of new applications and devices.

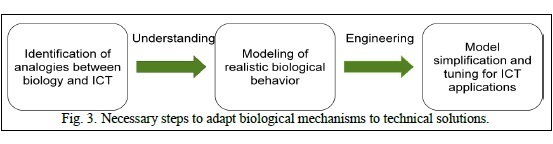

Many methods and techniques are really bio-inspired as they follow principles that have been studied in nature and that promise positive effects if applied to technical systems. Three steps can be identified that are always necessary for developing bio-inspired methods:

1. Identification of analogies: which structures and methods seem to be similar.
2. Understanding: detailed modeling of realistic biological behavior.
3. Engineering: model simplification and tuning for technical applications.

A complicated, strictly organized internal structure is necessary for any system to exhibit robust external behavior. That is, there is an inherent trade-off between structural simplicity and robustness. Both the human body and the Internet have a complex, strictly organized internal structure. The human body has many different organs and physiological systems, each of which serves a specific purpose.

A kidney cannot serve as a lung, nor vice versa. The Internet also contains a number of specialized devices. At its core are high-speed routers, which single-mindedly forward data in a highly optimized manner. At the edges of the network is a diverse array of application-oriented devices, such as laptop computers and cellular phones. A high-speed router would be no more helpful in reading our e-mail than a kidney would be in oxygenating our blood.

Also, complex systems are only optimized to be robust against expected failures or perturbations. Such a system becomes quite fragile with regard to unexpected or rare failures or perturbations. For example, humans are quite robust to the sorts of changes we have evolved to tolerate: we live in climates from the Arctic to the Sahara desert, we can obtain energy from any number of different food sources, and we can even survive the loss of a limb.

However, a small change to an important gene or exposure to trace levels of an unusual toxin can cause massive systemic failures. The Internet was optimized for robustness to physical failures of individual components. However, it is fragile to even small soft failures, such as an error in the design of a protocol (as occurred in the early days of the ARPAnet) or a single component that breaks the rules (as with prefix hijacking).
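To make the prefix hijacking case concrete, here is a minimal sketch of one common form of it, assuming routers choose the route with the longest matching prefix. The route table, the origin labels, and the `best_route` helper are hypothetical and only illustrate how a single rule-breaking announcement can redirect traffic even though no component has physically failed.

```python
import ipaddress

def best_route(routes, destination):
    """Return the origin whose announced prefix is the longest match."""
    address = ipaddress.ip_address(destination)
    matches = [(net, origin) for net, origin in routes if address in net]
    if not matches:
        return None
    # Longest-prefix match: the most specific announcement wins.
    return max(matches, key=lambda match: match[0].prefixlen)[1]

# Hypothetical route table using a documentation-only address range.
routes = [(ipaddress.ip_network("203.0.113.0/24"), "legitimate-origin")]
before = best_route(routes, "203.0.113.10")

# A misbehaving node announces a more specific prefix it does not own.
routes.append((ipaddress.ip_network("203.0.113.0/25"), "rogue-origin"))
after = best_route(routes, "203.0.113.10")

print(before)  # legitimate-origin
print(after)   # rogue-origin
```

Every link and router in this sketch keeps working, yet traffic for the affected addresses now flows to the wrong place, which is the kind of soft failure the design was not optimized against.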

In both biological and networked systems, far simpler designs exist, but these simpler systems lack resilience against even the most common failures. Bacteria are composed of only a single cell, rather than a complex, structured network of cells like a human, but can only tolerate very small changes in their external environment, such as a slight change in temperature or pH. Building a network with a star topology makes many of the most difficult design challenges in the Internet, such as packet routing and addressing, trivially simple. However, a network with a star topology is rendered completely useless by the failure of the single central node.
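The star topology trade-off can be illustrated with a small reachability check. This is a sketch under simple assumptions: the six-node size, the ring used for comparison, and the helper names `star`, `ring`, and `reachable` are all chosen here for illustration and do not come from any particular network design.

```python
from collections import deque

def reachable(adjacency, start, failed):
    """Return the nodes reachable from start when the failed nodes are down."""
    if start in failed:
        return set()
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency[node]:
            if neighbor not in failed and neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen

def star(n):
    """Node 0 is the hub; nodes 1..n-1 connect only to the hub."""
    adjacency = {i: set() for i in range(n)}
    for leaf in range(1, n):
        adjacency[0].add(leaf)
        adjacency[leaf].add(0)
    return adjacency

def ring(n):
    """Each node connects to its two neighbors on a cycle."""
    adjacency = {i: set() for i in range(n)}
    for i in range(n):
        adjacency[i].add((i + 1) % n)
        adjacency[(i + 1) % n].add(i)
    return adjacency

# Fail node 0 in both networks and see what node 1 can still reach.
star_after_hub_failure = reachable(star(6), 1, failed={0})
ring_after_node_failure = reachable(ring(6), 1, failed={0})

print(len(star_after_hub_failure))   # 1 (node 1 is isolated)
print(len(ring_after_node_failure))  # 5 (all surviving nodes)
```

In the star, routing is trivial because every path goes through the hub, but losing that one node isolates every leaf. The ring needs a real routing decision at each hop, yet it survives the same single failure.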

Swarm intelligence is the property of a system whereby the collective behaviors of agents interacting locally with their environment cause coherent functional global patterns to emerge. Individual insects function much like simple computing devices: they execute simple procedures based on their input, causing them to produce some output. At any given moment, an individual insect is merely reacting to stimuli in its immediate surroundings. Large-scale, seemingly global cooperation emerges as a result of two phenomena.

First, each species is genetically pre-programmed to perform an identical set of procedures given the same set of stimuli. Second, by performing these procedures, creatures implicitly modify their environment (of which they are one feature), creating new stimuli for themselves and those around them. This phenomenon of indirect communication via changes to the environment is called stigmergy.
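A minimal sketch of stigmergy follows, assuming ants that choose between two paths to a food source and mark the path they use with pheromone. The two-path setup, the path lengths, the evaporation rate, and the function names are illustrative assumptions. The model is also simplified: it tracks the expected share of ants on each path rather than simulating individual insects.

```python
def choice_probabilities(pheromone):
    """Rule shared by every ant: prefer a path in proportion to its pheromone."""
    total = sum(pheromone.values())
    return {path: level / total for path, level in pheromone.items()}

def simulate(lengths, steps=200, evaporation=0.1):
    """Return the expected share of ants on each path at every step."""
    pheromone = {path: 1.0 for path in lengths}  # no initial preference
    history = []
    for _ in range(steps):
        shares = choice_probabilities(pheromone)
        history.append(shares)
        for path, length in lengths.items():
            # A shorter path is completed more often per unit time, so it
            # collects more pheromone; old pheromone gradually evaporates.
            deposit = shares[path] / length
            pheromone[path] = (1.0 - evaporation) * pheromone[path] + deposit
    history.append(choice_probabilities(pheromone))
    return history

history = simulate({"short": 1.0, "long": 2.0})

print(round(history[0]["short"], 2))  # 0.5
print(history[-1]["short"] > 0.99)    # True
```

No ant compares the two paths or communicates with another ant directly. Each one reacts only to the local pheromone level, and by doing so changes that level for the ants that follow, which is enough for the colony as a whole to settle on the shorter path.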
